Layer N: Hyper-Performant and Hyper-Composable Execution. An Interview with Co-Founder David Cao

Summary

TL;DR: In this discussion, David Cao, co-founder of Layer N, describes the company's Layer 2 blockchain, which is designed to address scalability problems in crypto. Cao covers how Layer N evolved, distinctive features such as its StateNet communication protocol, and the intersection of AI and crypto. The conversation also touches on upcoming partnerships and Layer N's plans for expanding on-chain capabilities and improving user experience.

Takeaways

- 🚀 Layer N is a hyper-performant, scalable Layer 2 blockchain designed to address scalability problems in crypto.

- 🌐 David Cao, co-founder of Layer N, describes his path from bioinformatics research at Harvard to building high-frequency trading systems on blockchains.

- 🛠️ Layer N's core innovation is a network of rollups running custom VMs, which lets applications use exponentially more compute while remaining seamlessly composable.

- 🔍 The platform aims for feature and performance parity with centralized systems without giving up the core benefits of decentralization, such as permissionlessness and censorship resistance.

- 🤝 Layer N is backed by well-known investors, including Peter Thiel's Founders Fund.

- 🔗 Layer N's StateNet concept gives applications and rollups a shared, standardized messaging protocol, so they can communicate with each other seamlessly and instantly.

- 💡 Layer N takes a modular approach to scalability: Layer N handles execution, Ethereum provides security, and partner data availability (DA) solutions such as EigenDA store transaction data.

- 🚧 Layer N's development is at an exciting stage: major strategic partnerships and announcements are expected soon, including a push to bring AI into on-chain applications.

- 🔄 The Inter-VM Communication (IVC) protocol lets assets move between rollups and applications without withdrawal periods or third-party bridges.

- 📈 In a closed test environment, Layer N's testnet exceeded 100k TPS, which points to its suitability for high-performance trading and order book applications.

- 🌟 Looking ahead, Layer N emphasizes practical engineering, future-proof modularity, and a commitment to trustless, decentralized systems.

Q & A

What is Layer N, and what are its core objectives?

-Layer N is a hyper-performant, scalable Layer 2 blockchain designed to address scalability problems in crypto. Its core objectives are to increase the surface area of what can be built on-chain by 10x and to give application developers more compute, so they can build complex applications without worrying about computational constraints.

How does Layer N approach scalability differently from other blockchain projects?

-Layer N introduces a new model of execution layers and virtual machines (VMs). It aims to give application developers unbounded compute surface area and lets them create custom VMs optimized for specific use cases, which can substantially improve performance and efficiency.

What is the significance of the partnership with EigenDA for Layer N?

-The EigenDA partnership gives Layer N a data availability (DA) solution, which its rollups depend on: the transaction data behind each rollup's state must be published somewhere anyone can retrieve it. EigenDA offers high bandwidth and storage capacity, both essential for the transaction volume Layer N aims to process.
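To give a sense of the bandwidth a DA layer must provide, the arithmetic is simply throughput multiplied by transaction size. The sketch below reuses the 100k TPS testnet figure from the takeaways; the 100-byte average transaction size is purely an assumption for illustration, not a Layer N or EigenDA number, and real systems batch and compress data in their own ways.

```python
SECONDS_PER_DAY = 86_400


def da_bytes_per_second(tps: int, bytes_per_tx: int) -> int:
    """Raw bytes per second a DA layer must accept if every transaction is published."""
    return tps * bytes_per_tx


def da_gigabytes_per_day(tps: int, bytes_per_tx: int) -> float:
    """The same load expressed in decimal gigabytes (1 GB = 1e9 bytes) per day."""
    return da_bytes_per_second(tps, bytes_per_tx) * SECONDS_PER_DAY / 1e9


if __name__ == "__main__":
    tps = 100_000        # closed-testnet figure quoted in the takeaways
    bytes_per_tx = 100   # ASSUMPTION: illustrative average size, not a published figure
    print(f"{da_bytes_per_second(tps, bytes_per_tx) / 1e6:.1f} MB/s")  # 10.0 MB/s
    print(f"{da_gigabytes_per_day(tps, bytes_per_tx):.0f} GB/day")     # 864 GB/day
```

Because the requirement scales linearly, doubling either throughput or transaction size doubles the DA load, which is why a high-bandwidth DA layer matters for this design.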

Can you explain the concept of zero-knowledge fraud proofs mentioned in the interview?

-Zero-knowledge fraud proofs are the mechanism Layer N uses to secure the integrity of its state transitions. Instead of generating a validity proof for every transaction, which is expensive and slow, a zero-knowledge proof is produced only when a fault is detected and challenged. This keeps validation cost and latency low while maintaining strong security guarantees.

What are the benefits of using custom VMs in Layer N's architecture?

-Custom VMs let developers build application-specific execution environments that are highly optimized for their intended use cases. This yields better performance, more efficient resource use, and the ability to handle complex computations that general-purpose VMs may struggle with.
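A minimal sketch of the custom-VM idea, assuming each rollup is an ordered log of transactions fed through a pluggable, deterministic state-transition function. `ExecutionVM`, `PaymentsVM`, `VotingVM`, and `Rollup` are illustrative names, not Layer N interfaces; the point is that the surrounding network only depends on the `apply` contract, so each application can implement it in whatever way suits its workload.

```python
from typing import Any, Protocol

State = dict[str, Any]
Tx = dict[str, Any]


class ExecutionVM(Protocol):
    """Hypothetical contract: a deterministic (state, tx) -> new_state function."""

    def apply(self, state: State, tx: Tx) -> State: ...


class PaymentsVM:
    """Specialised VM that only understands balance transfers."""

    def apply(self, state: State, tx: Tx) -> State:
        new = dict(state)
        sender, receiver, amount = tx["from"], tx["to"], tx["amount"]
        if amount <= 0 or new.get(sender, 0) < amount:
            return new  # invalid transfer: state unchanged
        new[sender] -= amount
        new[receiver] = new.get(receiver, 0) + amount
        return new


class VotingVM:
    """A different specialised VM: one vote per address, tallied by choice."""

    def apply(self, state: State, tx: Tx) -> State:
        new = dict(state)
        voted = set(new.get("_voted", ()))
        if tx["voter"] in voted:
            return new  # duplicate vote ignored
        new["_voted"] = tuple(sorted(voted | {tx["voter"]}))
        new[tx["choice"]] = new.get(tx["choice"], 0) + 1
        return new


class Rollup:
    """A rollup here is just an ordered transaction log run through its configured VM."""

    def __init__(self, vm: ExecutionVM, genesis: State) -> None:
        self.vm = vm
        self.state: State = dict(genesis)

    def run_batch(self, txs: list[Tx]) -> State:
        for tx in txs:
            self.state = self.vm.apply(self.state, tx)
        return self.state
```

For example, `Rollup(PaymentsVM(), {"alice": 5}).run_batch([{"from": "alice", "to": "bob", "amount": 2}])` yields `{"alice": 3, "bob": 2}`, while a `VotingVM` rollup ignores a second vote from the same address. Neither VM has to pay for features it does not use.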

How does Layer N address liquidity fragmentation across different rollups?

-Layer N addresses liquidity fragmentation with a shared communication protocol, the Inter-VM Communication (IVC) protocol. IVC lets rollups and applications in the Layer N ecosystem communicate and share liquidity seamlessly, removing the need for third-party bridges and reducing complexity for users and developers.
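The cross-rollup transfer described in this answer can be sketched with a single ordered message queue shared by all rollups. In this hypothetical model the source rollup debits the sender when a message is sent and the destination rollup credits the recipient when the message is processed; because both sides consume the same queue, no separate bridge or withdrawal window is involved. `Message`, `RollupLedger`, and `SharedMessageBus` are assumptions for illustration, not the actual IVC specification, and the toy ignores proofs and settlement entirely.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Message:
    src: str        # sending rollup
    dst: str        # receiving rollup
    sender: str
    recipient: str
    asset: str
    amount: int


@dataclass
class RollupLedger:
    name: str
    balances: dict[tuple[str, str], int] = field(default_factory=dict)  # (owner, asset) -> amount

    def debit(self, owner: str, asset: str, amount: int) -> None:
        key = (owner, asset)
        if amount <= 0 or self.balances.get(key, 0) < amount:
            raise ValueError(f"{self.name}: cannot debit {amount} {asset} from {owner}")
        self.balances[key] -= amount

    def credit(self, owner: str, asset: str, amount: int) -> None:
        key = (owner, asset)
        self.balances[key] = self.balances.get(key, 0) + amount


class SharedMessageBus:
    """Hypothetical shared pipeline: every rollup sends to and receives from one ordered queue."""

    def __init__(self, rollups: list[RollupLedger]) -> None:
        self.rollups = {r.name: r for r in rollups}
        self.pending: deque[Message] = deque()

    def send(self, msg: Message) -> None:
        if msg.dst not in self.rollups:
            raise KeyError(f"unknown destination rollup {msg.dst!r}")
        # Debit at the source first so the same funds cannot be sent twice.
        self.rollups[msg.src].debit(msg.sender, msg.asset, msg.amount)
        self.pending.append(msg)

    def process(self) -> int:
        """Deliver pending messages in FIFO order; return how many were delivered."""
        delivered = 0
        while self.pending:
            msg = self.pending.popleft()
            self.rollups[msg.dst].credit(msg.recipient, msg.asset, msg.amount)
            delivered += 1
        return delivered
```

Debiting on send and crediting on delivery keeps the total supply across rollups constant once the queue is drained, which is the property a shared messaging layer has to preserve.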

What is the current development stage of Layer N, and what can we expect in the near future?

-Layer N is at an advanced stage of development, with a public testnet and a subsequent mainnet launch planned. In the near term, Layer N expects to announce partnerships with major liquidity providers and is working on integrating AI use cases into the platform, aiming to deliver tangible applications beyond infrastructure.

What are the security considerations for Layer N's architecture?

-Security is a key consideration in Layer N's architecture. Using an off-chain data availability layer means Layer N inherits only a subset of Ethereum's security, but it maintains a strong security posture through zero-knowledge fraud proofs and its partnership with EigenDA. The aim is a system that stays decentralized and trustless while delivering high performance.

How does Layer N's approach compare with other Layer 2 solutions such as Optimism and Arbitrum?

-Layer N shares other Layer 2s' vision of decentralized applications but differentiates itself through modularity, custom VMs, and a shared communication protocol. It aims to offer developers and users a seamless, high-performance environment, with a future-proof design that can adopt new technologies and research findings.

What are the key features of the Nord VM developed by Layer N?

-The Nord VM is a virtual machine built specifically for trading and order books. It is designed to handle tens of thousands of trades per second, offering performance on par with centralized exchanges while adding on-chain settlement and trustlessness.
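For intuition about the kind of logic a trading-specific VM executes, here is a textbook price-time priority matching engine: an incoming order trades against the best opposite price first, and among equal prices the oldest resting order fills first. This is a generic sketch with integer tick prices, not the Nord VM's actual design or data structures.

```python
import heapq
import itertools
from dataclasses import dataclass


@dataclass
class Fill:
    maker_id: int
    taker_id: int
    price: int  # integer ticks avoid floating-point rounding
    qty: int


class OrderBook:
    """Minimal price-time priority limit order book."""

    def __init__(self) -> None:
        # Heap entries are (sort_key, seq, order_id, remaining_qty).
        # Bids store -price so the highest bid is at the top of a min-heap.
        self._bids: list[tuple[int, int, int, int]] = []
        self._asks: list[tuple[int, int, int, int]] = []
        self._seq = itertools.count()  # arrival order breaks ties at equal prices

    def submit(self, order_id: int, side: str, price: int, qty: int) -> list[Fill]:
        """Match a limit order against the book, then rest any unfilled remainder."""
        if side not in ("buy", "sell"):
            raise ValueError("side must be 'buy' or 'sell'")
        is_buy = side == "buy"
        opposite = self._asks if is_buy else self._bids
        fills: list[Fill] = []
        while qty > 0 and opposite:
            key, seq, maker_id, maker_qty = opposite[0]
            best = key if is_buy else -key
            if (best > price) if is_buy else (best < price):
                break  # best opposite price no longer crosses our limit
            traded = min(qty, maker_qty)
            fills.append(Fill(maker_id, order_id, best, traded))  # trade at maker's price
            qty -= traded
            if traded == maker_qty:
                heapq.heappop(opposite)
            else:
                # Partial fill: same (key, seq), so the maker keeps its queue priority.
                heapq.heapreplace(opposite, (key, seq, maker_id, maker_qty - traded))
        if qty > 0:
            own = self._bids if is_buy else self._asks
            heapq.heappush(own, (-price if is_buy else price, next(self._seq), order_id, qty))
        return fills


if __name__ == "__main__":
    book = OrderBook()
    book.submit(1, "sell", 101, 5)
    book.submit(2, "sell", 100, 3)
    # Buy 6 @ 101 takes 3 @ 100 from order 2, then 3 @ 101 from order 1.
    print(book.submit(3, "buy", 101, 6))
```

Each match and insert costs O(log n) in the number of resting orders, which is one reason order books are a natural fit for a purpose-built VM rather than generic contract storage.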

What strategic partnerships has Layer N announced or is it planning to announce?

-Layer N has announced a partnership with SushiSwap to develop a hyper-performant order book. It also plans to announce partnerships with major liquidity providers, which should bring more users and assets to the platform.

Outlines

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифMindmap

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифKeywords

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифHighlights

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифTranscripts

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифПосмотреть больше похожих видео

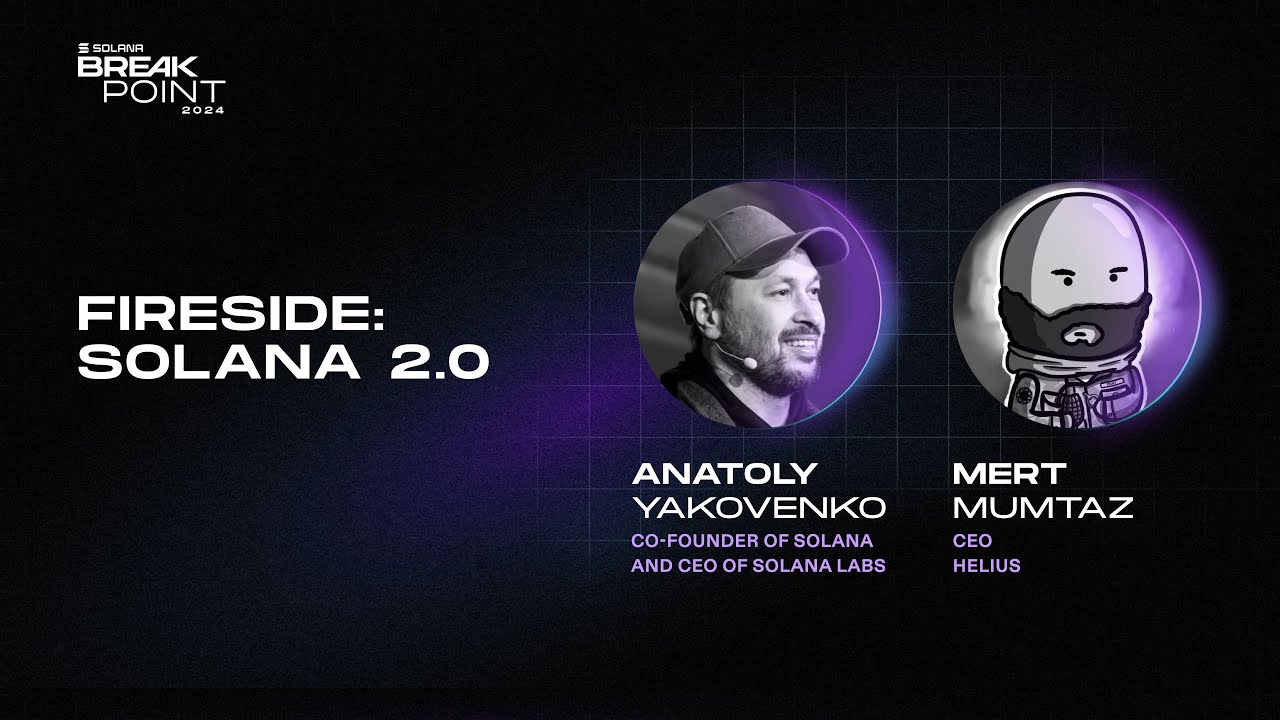

Breakpoint 2024: Fireside: Solana 2.0 (Anatoly Yakovenko, Mert Mumtaz)

Mengenal Lapisan Blockchain: Layer 0, Layer 1, Layer 2!

What is Blockchain Layer 0, 1, 2, 3 Explained | Layers of Blockchain Architecture

Ethereum Layer 2 Solutions Explained: Arbitrum, Optimism And More!

Kaspa Has It Peaked or is May 5th Just The Beginning

Blockchain Scalability solutions How to build decentralised exchanges

5.0 / 5 (0 votes)