DynamoDB: Under the hood, managing throughput, advanced design patterns | Jason Hunter | AWS Events

Summary

TL;DR: This talk examines the inner workings of Amazon DynamoDB: its partitioning scheme, data replication across Availability Zones, and the mechanics of read and write operations. It covers consistency models, Global Secondary Indexes, and throughput capacity, with practical use cases and design patterns for optimizing NoSQL database performance. It also covers advanced features such as DAX, transactions, parallel scans, and the Standard-Infrequent Access table class for cost-effective data storage.

Takeaways

- 🔍 **Deep Dive into DynamoDB**: The talk focuses on understanding the inner workings of Amazon DynamoDB, exploring how it delivers value and operates under the hood.

- 📦 **Data Partitioning**: DynamoDB uses a partitioning system where data is distributed across multiple partitions based on the hash of the partition key, ensuring efficient data retrieval and storage.

- 🔑 **Consistent Hashing**: The hashing of partition keys determines the allocation of items to specific partitions, which is crucial for data distribution and load balancing.

- 💾 **Physical Storage**: Behind the logical partitions, DynamoDB replicates data across multiple servers and availability zones to ensure high availability and durability.

- 🌐 **DynamoDB's Backend Infrastructure**: The service utilizes a large number of request routers and storage nodes that communicate via heartbeats to maintain data consistency and handle leader election.

- 🔍 **GetItem Operations**: Retrieving items can be done through either strong consistency, which always goes to the leader node, or eventual consistency, which can use any available node.

- 🔄 **Global Secondary Indexes (GSIs)**: GSIs are implemented as separate tables with their own throughput capacity (provisioned or on-demand) and can be used to efficiently query data based on non-primary key attributes.

- ⚡ **Performance Considerations**: The design of the table schema, such as the use of sort keys and GSIs, can significantly impact performance and cost, especially for large-scale databases.

- 💡 **Optimizing Data Access**: The script suggests using hierarchical sort keys and sparse GSIs to optimize access patterns and reduce costs, catering to different query requirements.

- 🛠️ **Handling High Traffic**: For extremely high-traffic scenarios, such as during Amazon Prime Day, DynamoDB Accelerator (DAX) can be used to cache and serve popular items quickly.

- 🔄 **Auto Scaling and Partition Splitting**: DynamoDB automatically scales and splits partitions to handle increased traffic and data size, ensuring consistent performance without manual intervention.

Q & A

What is the main focus of the second part of the DynamoDB talk?

-The main focus of the second part of the DynamoDB talk is to explore how DynamoDB operates under the hood, providing insights into the database's internal workings and explaining the value delivered in the first part.

How does DynamoDB handle partitioning of data?

-DynamoDB uses a partition key to hash data items and distribute them across different physical partitions. Each partition is responsible for a section of the key space and can store an arbitrary number of items.

What is the purpose of hashing in DynamoDB's partitioning scheme?

-Hashing is used to determine the partition in which a data item should be stored. It takes the partition key, runs it through a mathematical process, and produces a fixed-length output that falls within a specific partition's range.
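
The mapping from partition key to partition can be sketched as follows. This is a minimal illustration, not DynamoDB's actual implementation: the use of MD5, the equal-sized ranges, and the names `key_hash`, `make_ranges`, and `partition_for` are all assumptions made for the example.

```python
import hashlib
from bisect import bisect_right

HASH_SPACE = 2 ** 128  # MD5 yields a 128-bit value (MD5 is an assumption here)


def key_hash(partition_key: str) -> int:
    """Turn a partition key into a fixed-length integer."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest, "big")


def make_ranges(partition_count: int) -> list:
    """Exclusive upper bounds of equal slices of the hash space (hypothetical layout)."""
    return [HASH_SPACE * (i + 1) // partition_count for i in range(partition_count)]


def partition_for(partition_key: str, upper_bounds: list) -> int:
    """Index of the partition whose range contains the key's hash."""
    return bisect_right(upper_bounds, key_hash(partition_key))


bounds = make_ranges(4)
for key in ("user#1", "user#2", "user#3"):
    print(key, "-> partition", partition_for(key, bounds))
```

The same key always lands in the same partition, while similar-looking keys are scattered across the key space.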

How does DynamoDB ensure high availability and durability of data?

-DynamoDB replicates each partition across multiple availability zones and hosts. This replication ensures that if an issue occurs with an availability zone or a host, there are still working copies of the data, making DynamoDB resilient to failures.

What is the difference between strong consistency and eventual consistency in DynamoDB reads?

-A strongly consistent read always returns the most recent data because the request is directed to the leader node. An eventually consistent read can be served by any of the nodes, so it may return slightly stale data, but it consumes half the read capacity.

How are Global Secondary Indexes (GSIs) implemented in DynamoDB?

-GSIs are implemented as a separate table that is automatically maintained by DynamoDB. A log propagator moves data from the base table to the GSI, and GSIs have their own provisioned capacity or can be in on-demand mode.

What are Read Capacity Units (RCUs) and Write Capacity Units (WCUs) in DynamoDB?

-RCUs and WCUs are the units of measure for the throughput of DynamoDB tables. RCUs represent the capacity to read data, while WCUs represent the capacity to write data. They can be provisioned or on-demand, with different pricing and performance implications.
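
As a rough illustration of how these units are consumed, the sketch below assumes the unit sizes given in the DynamoDB documentation (4 KB per read unit, 1 KB per write unit, and half cost for eventually consistent reads). It ignores transactional requests, and the function names are hypothetical.

```python
import math

READ_UNIT_BYTES = 4 * 1024   # one RCU covers a strongly consistent read of up to 4 KB
WRITE_UNIT_BYTES = 1024      # one WCU covers a write of up to 1 KB


def read_capacity_units(item_size_bytes: int, strongly_consistent: bool = True) -> float:
    """Capacity consumed by reading one item (non-transactional)."""
    blocks = max(1, math.ceil(item_size_bytes / READ_UNIT_BYTES))
    # Eventually consistent reads cost half as much.
    return blocks if strongly_consistent else blocks / 2


def write_capacity_units(item_size_bytes: int) -> int:
    """Capacity consumed by writing one item (non-transactional)."""
    return max(1, math.ceil(item_size_bytes / WRITE_UNIT_BYTES))


print(read_capacity_units(6_000))                             # item spans two 4 KB blocks
print(read_capacity_units(6_000, strongly_consistent=False))  # half of the above
print(write_capacity_units(6_000))                            # item spans six 1 KB blocks
```

Sizes are rounded up per item, which is why small items are cheaper to write than one large item that changes often.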

What is the maximum size of an item that can be stored in a DynamoDB table?

-The maximum size of an item in a DynamoDB table is 400 KB. If more data needs to be stored, it should be split into multiple items.

How does DynamoDB handle scaling and partition splitting?

-DynamoDB scales by partitioning. Each physical partition supports up to 1,000 WCUs and 3,000 RCUs per second. If a partition grows beyond 10 GB or receives sustained traffic beyond what it can serve, it is split automatically to maintain performance.
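
The limits above suggest a back-of-the-envelope lower bound on how many partitions a table needs. This is only a rule-of-thumb sketch: the service decides the real partition count, and `estimate_min_partitions` is a hypothetical helper.

```python
import math

MAX_PARTITION_BYTES = 10 * 1024 ** 3  # 10 GB per partition
MAX_PARTITION_RCU = 3000
MAX_PARTITION_WCU = 1000


def estimate_min_partitions(table_bytes: int, rcu: float, wcu: float) -> int:
    """Rough lower bound: enough partitions for both the data size and the throughput."""
    by_size = math.ceil(table_bytes / MAX_PARTITION_BYTES)
    by_throughput = math.ceil(rcu / MAX_PARTITION_RCU + wcu / MAX_PARTITION_WCU)
    return max(1, by_size, by_throughput)


# 25 GB of data with modest traffic: size is the limiting factor.
print(estimate_min_partitions(25 * 1024 ** 3, rcu=100, wcu=100))
# 1 GB of data with heavy traffic: throughput is the limiting factor.
print(estimate_min_partitions(1 * 1024 ** 3, rcu=3000, wcu=1000))
```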

What is the role of the burst bucket in DynamoDB's provisioned capacity mode?

-The burst bucket in DynamoDB's provisioned capacity mode allows for temporary spikes in traffic above the provisioned capacity by storing unused capacity tokens from previous time intervals, enabling higher throughput for short durations without incurring additional costs.
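
A burst bucket behaves much like a token bucket. The simulation below is a simplified sketch that assumes one bucket for the whole table, one-second ticks, and the 300 seconds of retained unused capacity described in the DynamoDB documentation. The class name `BurstBucket` is made up for the example.

```python
class BurstBucket:
    """Toy model: unused provisioned capacity is banked and spent on later spikes."""

    def __init__(self, provisioned_per_second: int, retention_seconds: int = 300):
        self.rate = provisioned_per_second
        self.max_tokens = provisioned_per_second * retention_seconds
        self.tokens = 0  # banked capacity, starts empty

    def tick(self, requested: int) -> int:
        """Advance one second and return how many capacity units were served."""
        available = self.rate + self.tokens
        served = min(requested, available)
        unused = self.rate - served  # negative while bursting above the provisioned rate
        self.tokens = max(0, min(self.max_tokens, self.tokens + unused))
        return served


bucket = BurstBucket(provisioned_per_second=100)
for demand in (20, 20, 500, 500):
    print(f"requested={demand} served={bucket.tick(demand)} banked={bucket.tokens}")
```

Two quiet seconds bank enough capacity to serve part of the spike. Once the bucket is empty, throughput falls back to the provisioned rate and the excess is throttled.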

What is the significance of having a good dispersion of partition keys in DynamoDB?

-A good dispersion of partition keys ensures that the read and write load is evenly distributed across different storage nodes, preventing any single node from becoming a bottleneck and maintaining overall performance and scalability.

How can DynamoDB's auto scaling feature be beneficial for handling varying workloads?

-Auto scaling in DynamoDB adjusts the provisioned capacity up and down based on actual traffic, within specified minimum and maximum limits. This ensures that the performance is maintained without the need for manual intervention and can handle varying workloads efficiently.

What is the purpose of using a hierarchical sort key in DynamoDB?

-A hierarchical sort key in DynamoDB allows for querying data at different granularities. It enables efficient retrieval of related items, such as all offices in a specific country or city, by structuring the sort key to reflect the hierarchy of the data.
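
The sketch below imitates a `begins_with` condition on a hierarchical sort key using an in-memory list instead of a real table. The attribute names `PK` and `SK`, the `#` delimiter, and the office codes are illustrative assumptions.

```python
def office_sort_key(country: str, state: str, city: str, office: str) -> str:
    """Compose the sort key from the broadest level down to the most specific."""
    return "#".join([country, state, city, office])


items = [
    {"PK": "OFFICES", "SK": office_sort_key("USA", "NY", "NYC", "JFK14")},
    {"PK": "OFFICES", "SK": office_sort_key("USA", "NY", "NYC", "JFK8")},
    {"PK": "OFFICES", "SK": office_sort_key("USA", "WA", "Seattle", "SEA18")},
    {"PK": "OFFICES", "SK": office_sort_key("FRA", "IDF", "Paris", "CDG7")},
]


def query_begins_with(table: list, pk: str, sk_prefix: str) -> list:
    """Stand-in for a Query with a begins_with condition on the sort key."""
    return sorted(i["SK"] for i in table if i["PK"] == pk and i["SK"].startswith(sk_prefix))


print(query_begins_with(items, "OFFICES", "USA#"))         # every office in the country
print(query_begins_with(items, "OFFICES", "USA#NY#NYC#"))  # every office in one city
```

Ending the prefix with the delimiter keeps `USA#NY#` from also matching a hypothetical `USA#NYX#...` key.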

Can you provide an example of how to optimize data storage for a shopping cart in DynamoDB?

-Instead of storing the entire shopping cart as a single large item, it is better to store each component, such as the cart items, the address, and the order history, as separate items under the same partition key. Each piece can then be retrieved or updated on its own, which lowers the capacity consumed per request.

What is a sparse Global Secondary Index (GSI) in DynamoDB?

-A sparse GSI in DynamoDB is a design pattern where the GSI is not populated with every item from the base table. It's used for specific access patterns where only a subset of items are relevant, reducing the cost and storage overhead of the GSI.
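
A sparse index works because an item is only written to a GSI when it carries the index's key attribute. The toy model below shows the effect on an in-memory list. The attribute name `OpenOrderDate` and the function `project_to_gsi` are hypothetical.

```python
def project_to_gsi(base_items: list, gsi_key_attribute: str) -> list:
    """Only items that have the GSI key attribute appear in the index."""
    return [item for item in base_items if gsi_key_attribute in item]


orders = [
    {"PK": "order#1", "Status": "SHIPPED"},
    {"PK": "order#2", "Status": "OPEN", "OpenOrderDate": "2021-11-30"},
    {"PK": "order#3", "Status": "DELIVERED"},
]

open_orders_index = project_to_gsi(orders, "OpenOrderDate")
print(open_orders_index)  # only order#2 is indexed
```

Removing the attribute when an order closes would drop the item from the index, so the index stays small however large the base table grows.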

How can DynamoDB Streams be utilized to update real-time aggregations?

-DynamoDB Streams can trigger a Lambda function upon a mutation event, such as an insert or update. The Lambda can then perform actions like incrementing a count attribute, providing real-time aggregations that can be queried efficiently.
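
A Lambda handler for this pattern might look roughly like the sketch below. It relies on the stream event layout (`Records`, `eventName`, `dynamodb.Keys`), assumes a string partition key named `PK`, and takes the actual write-back as an injected `apply_delta` callback so the example runs without AWS access. In a real function, that callback would issue an atomic counter update against the table.

```python
from collections import Counter


def count_deltas(event: dict, pk_name: str = "PK") -> dict:
    """Net change in item count per partition key for one batch of stream records."""
    deltas = Counter()
    for record in event.get("Records", []):
        pk = record["dynamodb"]["Keys"][pk_name]["S"]
        if record["eventName"] == "INSERT":
            deltas[pk] += 1
        elif record["eventName"] == "REMOVE":
            deltas[pk] -= 1
        # MODIFY leaves the item count unchanged.
    return dict(deltas)


def handler(event, context=None, apply_delta=None):
    """Entry point: compute the deltas and hand each non-zero one to apply_delta."""
    deltas = count_deltas(event)
    if apply_delta is not None:
        for pk, delta in deltas.items():
            if delta:
                apply_delta(pk, delta)
    return deltas


sample_event = {"Records": [
    {"eventName": "INSERT", "dynamodb": {"Keys": {"PK": {"S": "A"}}}},
    {"eventName": "INSERT", "dynamodb": {"Keys": {"PK": {"S": "A"}}}},
    {"eventName": "MODIFY", "dynamodb": {"Keys": {"PK": {"S": "A"}}}},
    {"eventName": "REMOVE", "dynamodb": {"Keys": {"PK": {"S": "B"}}}},
]}
print(handler(sample_event, apply_delta=lambda pk, d: print("update", pk, d)))
```

Aggregating per batch keeps the number of writes to the count item low. Note that a retried batch would be counted twice unless the update is made idempotent.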

What is the significance of the Time-To-Live (TTL) feature in DynamoDB?

-The TTL feature in DynamoDB automatically deletes an item after the expiration timestamp stored in a designated attribute has passed, without incurring any write capacity unit (WCU) charges. This is useful for data that is meant to be temporary, such as session data, because expired items stop adding to storage costs.
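
TTL is driven by a numeric attribute holding a Unix epoch timestamp in seconds. The helpers below sketch how such an attribute could be set and checked. The attribute name `expires_at` and both function names are assumptions for the example.

```python
import time


def with_ttl(item: dict, ttl_seconds: int, now=None, attribute: str = "expires_at") -> dict:
    """Return a copy of the item with an expiration time in epoch seconds."""
    now = time.time() if now is None else now
    return {**item, attribute: int(now) + ttl_seconds}


def is_expired(item: dict, now: float, attribute: str = "expires_at") -> bool:
    """True when the item carries a TTL attribute that is already in the past."""
    return attribute in item and item[attribute] <= now


session = with_ttl({"PK": "session#abc"}, ttl_seconds=3600, now=1_700_000_000)
print(session)
print(is_expired(session, now=1_700_000_000 + 7200))
```

Deletion happens in the background and is not instantaneous, so readers that must never see expired data can apply a check like `is_expired` themselves.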

What is the DynamoDB Standard-Infrequent Access (Standard-IA) table class, and how does it differ from the standard table class?

-The Standard-IA table class is a cost-effective option for storing data that is infrequently accessed. It offers 60% lower storage costs than the standard table class, in exchange for 25% higher read and write costs. There is no performance trade-off, making it a suitable choice for large tables where storage dominates the bill.
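
Whether the switch pays off depends on how the bill splits between storage and throughput. The sketch below applies the two percentages quoted above to a table's current monthly costs. It assumes the 25% surcharge applies to all read and write charges, and the inputs are whatever the table costs today under the standard class rather than real AWS prices.

```python
STORAGE_MULTIPLIER = 0.40     # 60% lower storage cost
THROUGHPUT_MULTIPLIER = 1.25  # 25% higher read/write cost (assumed to cover all throughput)


def standard_ia_monthly_cost(storage_cost: float, throughput_cost: float) -> float:
    """Projected monthly cost after switching, given today's standard-class costs."""
    return storage_cost * STORAGE_MULTIPLIER + throughput_cost * THROUGHPUT_MULTIPLIER


def standard_ia_is_cheaper(storage_cost: float, throughput_cost: float) -> bool:
    """True when the projected Standard-IA bill is below the current bill."""
    return standard_ia_monthly_cost(storage_cost, throughput_cost) < storage_cost + throughput_cost


print(standard_ia_is_cheaper(storage_cost=1000, throughput_cost=500))  # storage-heavy table
print(standard_ia_is_cheaper(storage_cost=100, throughput_cost=1000))  # throughput-heavy table
```

Under these multipliers the break-even point is where 60% of the storage cost equals 25% of the throughput cost, that is, when storage is roughly 42% of the throughput spend.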

How can the new DynamoDB feature introduced in November 2021 help in managing costs for large tables?

-The introduction of the Standard-Infrequent Access (Standard-IA) table class in November 2021 allows for significant cost savings on storage for large tables that are infrequently accessed. It provides a 60% reduction in storage costs while maintaining the same performance levels as the standard table class.

Outlines

Dieser Bereich ist nur für Premium-Benutzer verfügbar. Bitte führen Sie ein Upgrade durch, um auf diesen Abschnitt zuzugreifen.

Upgrade durchführenMindmap

Dieser Bereich ist nur für Premium-Benutzer verfügbar. Bitte führen Sie ein Upgrade durch, um auf diesen Abschnitt zuzugreifen.

Upgrade durchführenKeywords

Dieser Bereich ist nur für Premium-Benutzer verfügbar. Bitte führen Sie ein Upgrade durch, um auf diesen Abschnitt zuzugreifen.

Upgrade durchführenHighlights

Dieser Bereich ist nur für Premium-Benutzer verfügbar. Bitte führen Sie ein Upgrade durch, um auf diesen Abschnitt zuzugreifen.

Upgrade durchführenTranscripts

Dieser Bereich ist nur für Premium-Benutzer verfügbar. Bitte führen Sie ein Upgrade durch, um auf diesen Abschnitt zuzugreifen.

Upgrade durchführenWeitere ähnliche Videos ansehen

Lecture 23 Part 2 Scaling Relational Databases

Data Replication Algorithms\Techniques

Bagaimana Cara Kerja Hard Disk Drive/HDD ?

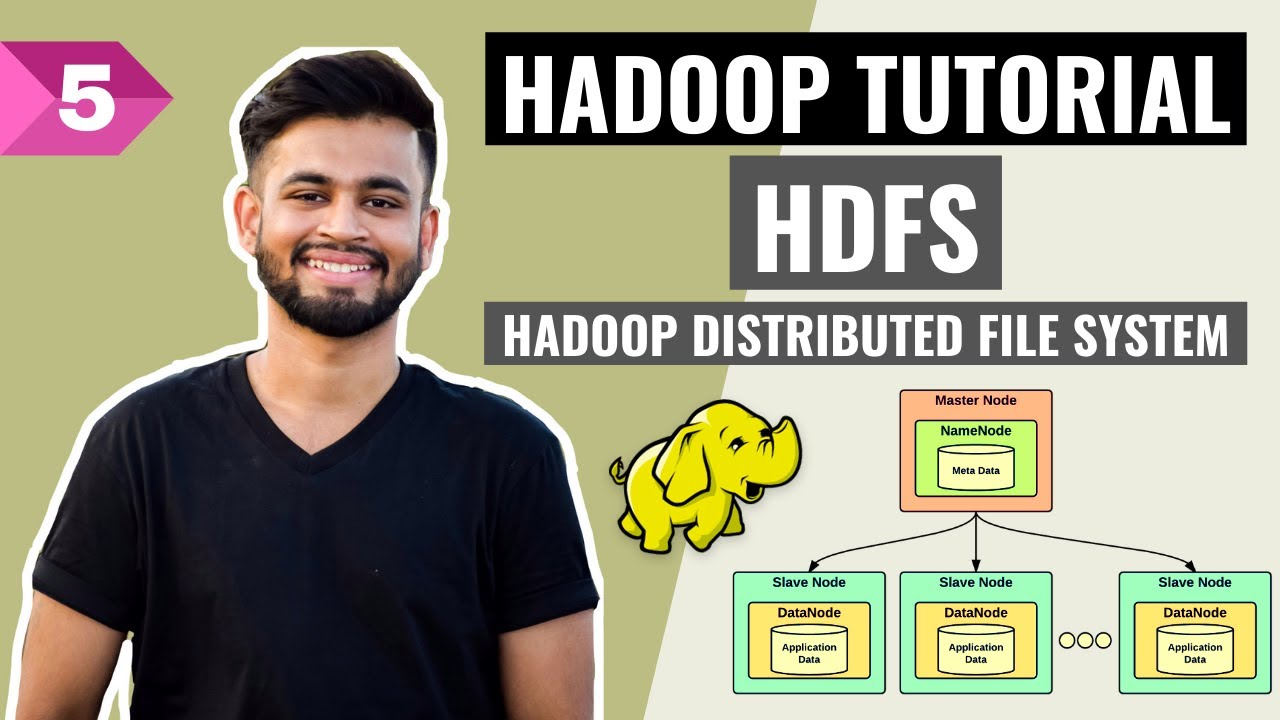

HDFS- All you need to know! | Hadoop Distributed File System | Hadoop Full Course | Lecture 5

Improve DynamoDB Performance with DAX

DS201.12 Replication | Foundations of Apache Cassandra

5.0 / 5 (0 votes)