Deploying a Machine Learning Model (in 3 Minutes)

Summary

TLDR: This video gives practical advice on deploying machine learning models to production. It covers deployment strategies, serving the model in the cloud or on the edge, optimizing for the target hardware, and monitoring performance. It discusses testing methods such as A/B tests and canary deployments, optimizing models with compression techniques, and handling traffic patterns efficiently. It also emphasizes monitoring model health to detect performance regressions and setting up infrastructure to evaluate the model on real-world data. Viewers are encouraged to explore Exponent's machine learning interview prep course for deeper insights.

Takeaways

- 🤖 Deploying machine learning models involves several engineering challenges and decisions, such as whether to run the model on the cloud or the device.

- ⚙️ Optimizing and compiling the model is essential, and different compilers target different framework and hardware combinations, such as NVCC for NVIDIA GPUs or XLA for TensorFlow.

- 📊 It's important to ensure the new model outperforms the current production model on real-world data, which may require A/B tests, canary deployments, feature flags, or shadow deployments.

- 🖥️ Deciding on hardware is crucial: serving the model remotely provides more compute resources but may add network latency, while serving on edge devices can offer better privacy and efficiency but may limit model capacity.

- 📉 Modern techniques like model compression and knowledge distillation can help improve trade-offs between latency, compute resources, and model capacity.

- 🚀 Optimizing models may require techniques such as vectorizing and batching operations to ensure efficient hardware use.

- 🔄 Handling traffic patterns is important: batching predictions can save computational resources, but handling predictions as they arrive may minimize latency.

- 📈 Continuous monitoring of the deployed model is essential to detect performance regressions caused by changing data or user behaviors.

- 🔍 Model performance can be evaluated using hand-labeled datasets or indirect metrics like click rates on recommended posts or videos.

- ⚠️ Monitoring tools should be in place to detect and troubleshoot serving issues such as high inference latency, memory use, or numerical instability.
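
The serving checks in the last takeaway can be sketched as a small alerting function. This is a minimal illustration, not something shown in the video: the function names, the 200 ms latency budget, and the nearest-rank percentile are all assumptions, and memory use is left out because measuring it depends on the serving stack.

```python
import math

def p95(latencies_ms):
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    ordered = sorted(latencies_ms)
    # Integer arithmetic avoids floating-point surprises in ceil(0.95 * n).
    rank = (95 * len(ordered) + 99) // 100  # 1-based rank
    return ordered[rank - 1]

def serving_alerts(latencies_ms, outputs, p95_budget_ms=200.0):
    """Return alert names for one monitoring window (hypothetical names)."""
    alerts = []
    # High inference latency: compare the tail, not the mean.
    if latencies_ms and p95(latencies_ms) > p95_budget_ms:
        alerts.append("high_latency")
    # Numerical instability: NaN or infinity in the model outputs.
    if any(not math.isfinite(x) for x in outputs):
        alerts.append("non_finite_output")
    return alerts
```

Checking the 95th percentile rather than the average means a small share of very slow requests still triggers the alert.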

Q & A

What are the three main components of machine learning (ML) deployment mentioned in the video?

-The three main components of ML deployment are: 1) Deploying the model, 2) Serving the model, and 3) Monitoring the model.

When should you deploy a new machine learning model to production?

-A new model should only be deployed when you are confident that it will perform better than the current production model on real-world data.

What are some methods to test a machine learning model in production before fully deploying it?

-Some methods include A/B testing, canary deployments, feature flags, and shadow deployments.
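
As a rough illustration of a canary deployment, the sketch below sends a fixed fraction of users to the new model and everyone else to the current one. The video does not describe an implementation; the `route` function, the hash-based bucketing, and the 10,000-bucket granularity are assumptions chosen for the example.

```python
import hashlib

def route(user_id: str, canary_fraction: float) -> str:
    """Deterministically assign a user to 'canary' or 'production'."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    # Map the user to one of 10,000 buckets; the same user always gets
    # the same bucket, so their experience stays consistent.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return "canary" if bucket < canary_fraction * 10_000 else "production"
```

Hashing the user ID, instead of picking randomly per request, keeps each user on one model for the duration of the rollout, which makes the two groups easier to compare.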

What factors should be considered when selecting hardware for serving a machine learning model?

-You need to decide whether the model will be served remotely (in the cloud) or on the edge (in the browser or on the device). Remote serving offers more compute resources but may face network latency, while edge serving is more efficient with better security and privacy, but may limit model capacity.

What are some techniques for improving trade-offs between model performance and efficiency?

-Model compression and knowledge distillation techniques can help improve trade-offs between performance and efficiency, especially when serving models on edge devices.
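
To make knowledge distillation concrete, here is a minimal sketch of the usual distillation loss: the KL divergence between temperature-softened teacher and student predictions, scaled by the square of the temperature. The video only names the technique, so the function names and the default temperature of 2.0 are assumptions; a real training loop would normally combine this term with the ordinary label loss.

```python
import math

def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    log_total = m + math.log(sum(math.exp(s - m) for s in scaled))
    return [s - log_total for s in scaled]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    log_p = log_softmax(teacher_logits, temperature)  # teacher
    log_q = log_softmax(student_logits, temperature)  # student
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    return temperature ** 2 * kl
```

The loss is zero when the student reproduces the teacher's logits and grows as the student's softened predictions diverge from the teacher's.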

What are some examples of compilers that can be used to optimize machine learning models for specific hardware?

-Examples of compilers include NVCC for NVIDIA GPUs with PyTorch, and XLA for TensorFlow models running on TPUs, GPUs, or CPUs.

What are some optimization techniques that might be needed when compiling machine learning models?

-Additional optimizations might include vectorizing iterative processes and batching operations so they can run on the same hardware where the data exists.

How should you handle different traffic patterns when serving a machine learning model?

-Predictions can be batched asynchronously or handled as they arrive. While batching is more efficient for computational resources, handling predictions as they arrive may incur less latency. For traffic spikes, using a smaller, less accurate model or a single model instead of ensembling predictions may be more efficient.
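
The trade-off between batching and handling predictions as they arrive can be shown with a small offline simulation. This is a sketch under stated assumptions: `micro_batches`, `max_batch_size`, and `max_wait_ms` are hypothetical names, and the function groups a pre-recorded list of arrivals instead of running timers the way a live server would.

```python
def micro_batches(arrivals, max_batch_size, max_wait_ms):
    """Group (arrival_ms, request) pairs, sorted by time, into batches.

    A batch closes when it is full, or when the next request arrives
    more than max_wait_ms after the first request in the batch.
    """
    batches = []
    current = []
    batch_start = None
    for arrival_ms, request in arrivals:
        full = len(current) >= max_batch_size
        if current and (full or arrival_ms - batch_start > max_wait_ms):
            batches.append(current)
            current = []
        if not current:
            batch_start = arrival_ms  # first request opens a new batch
        current.append(request)
    if current:
        batches.append(current)
    return batches
```

Setting `max_batch_size` to 1 reproduces the "as they arrive" mode, while larger sizes trade up to `max_wait_ms` of extra latency for fewer model invocations.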

Why is it important to monitor machine learning models after deployment?

-Monitoring is crucial because data and user behaviors change over time, leading to performance regressions. A model that was once accurate may become obsolete, requiring updates or a new model.

What tools or strategies should be implemented to monitor model performance post-deployment?

-You should set up infrastructure to detect data drift, feature drift, or model drift. Also, benchmarking competing models with real-world data, using hand-labeled datasets or indirect metrics like user clicks, can help determine when the model's performance has regressed enough to require intervention.
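
One common way to detect drift in a single feature is the Population Stability Index (PSI), which compares how training data and live data fall into the same bins. The video does not name a specific metric, so PSI, the function name, and the bin edges are assumptions for illustration; the sketch also assumes both samples are non-empty and the edges are sorted in ascending order.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bins."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # Bin index = number of edges at or below the value.
            idx = sum(1 for e in edges if v >= e)
            counts[idx] += 1
        total = len(values)
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / total, eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the binned distributions match. A frequently quoted rule of thumb treats values above roughly 0.2 as a shift worth investigating, but that threshold is a convention and should be tuned per feature.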

Outlines

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنMindmap

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنKeywords

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنHighlights

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنTranscripts

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنتصفح المزيد من مقاطع الفيديو ذات الصلة

#1 Machine Learning Engineering for Production (MLOps) Specialization [Course 1, Week 1, Lesson 1]

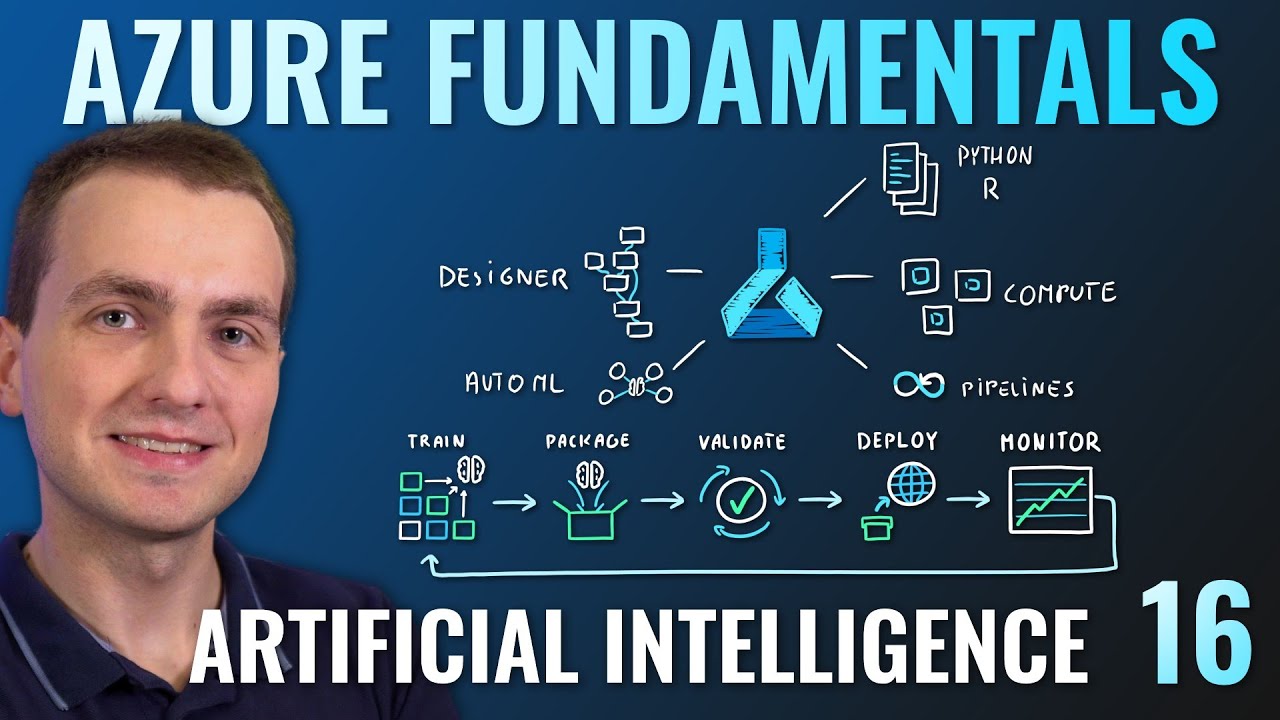

AZ-900 Episode 16 | Azure Artificial Intelligence (AI) Services | Machine Learning Studio & Service

AI & ML Explained in Malayalam

Challenges in Machine Learning | Problems in Machine Learning

PENGANTAR SAINS DATA—DEPLOYING MODEL (KLP 7)

Generative AI For Developers | Generative AI Series

5.0 / 5 (0 votes)