What Is The Resolution Of The Eye?

Summary

TLDR: In this video, Michael from Vsauce compares human vision with camera resolution. He explains that while cameras capture static images, human sight is a dynamic process in which the brain constantly processes data from the eyes. Although our eyes can distinguish fine details, human vision is not digital and cannot be described purely in pixels. Michael also discusses the limits of human vision, using foveal resolution and blind spots as examples. He concludes with a philosophical reflection on how life doesn't follow the structured narrative of movies, emphasizing life's continuous, unresolved nature.

Takeaways

- 🎥 Hollywood doesn't produce the most films annually; India does, followed by Nigeria.

- 👀 The human eye's resolution isn't comparable to a camera; it uses a complex process to perceive the world.

- 🧠 Our brain processes the information from our eyes, often filling in gaps like blind spots and filtering out unimportant details.

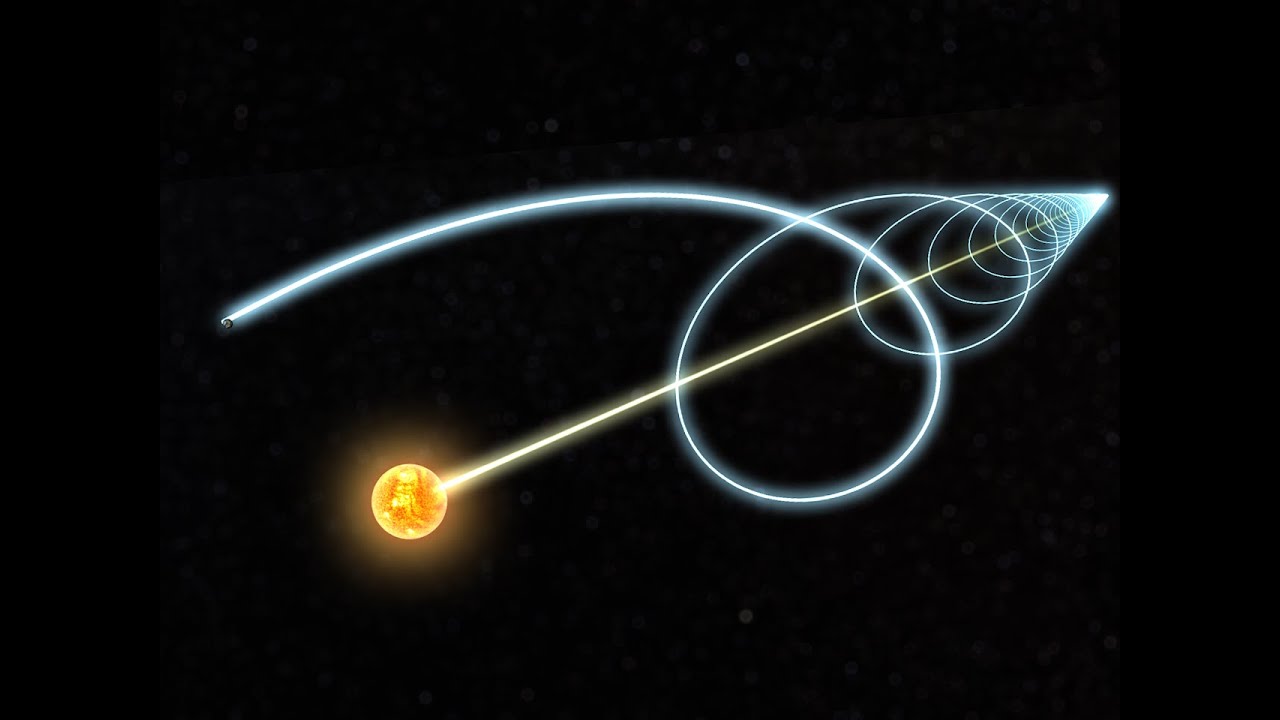

- 🎨 The fovea, which covers only about 2 degrees of your field of view, provides the sharpest vision; the rest of the visual field is lower in resolution.

- 📸 Roger N. Clark estimated that the human eye can resolve up to 576 megapixels across the entire field of view, but only around 7 megapixels are needed for the fovea's sharp central vision.

- 🖼️ Human vision isn't digital, and perception isn't stored as a perfect snapshot like a digital camera file.

- 📺 Technologies like Retina Displays can already fool our eyes, showing that certain pixel densities are beyond what we can differentiate.

- 🎞️ Real life isn't like a movie; conflicts don't have neatly resolved endings, and life is continuous without a narrative resolution.

- 📐 Our visual system is more about continuous perception and top-down processing than discrete pixel resolution.

- 🔄 Life moves on after events in ways that movies can't portray, showing the difference between cinematic and real-life experiences.
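The Retina Display takeaway can be illustrated with a rough calculation: at a given viewing distance, there is a pixel density beyond which a single pixel subtends less than the smallest angle the eye can resolve. The sketch below is only an approximation under stated assumptions. It takes 1 arcminute as the resolvable angle (the figure conventionally associated with 20/20 acuity), and the function name `ppi_threshold` and the viewing distances are hypothetical choices for illustration, not values from the video.

```python
import math


def ppi_threshold(viewing_distance_in, acuity_arcmin=1.0):
    """Pixels per inch at which one pixel subtends `acuity_arcmin` arcminutes.

    Assumption: the eye cannot separate details smaller than `acuity_arcmin`
    (1 arcminute is the conventional figure for 20/20 acuity).
    """
    # Convert the resolvable angle from arcminutes to radians.
    angle_rad = math.radians(acuity_arcmin / 60)
    # Physical width of a pixel that subtends that angle at this distance.
    pixel_pitch_in = viewing_distance_in * math.tan(angle_rad)
    return 1 / pixel_pitch_in


# Hypothetical viewing distances, in inches.
for distance in (10, 12, 24):
    print(f"{distance} in: {ppi_threshold(distance):.0f} ppi")
```

Under these assumptions, a screen held 12 inches away needs roughly 286 pixels per inch before individual pixels stop being distinguishable, and the required density falls as the screen moves farther away.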

Q & A

What countries produce the most feature films annually?

-India produces the most feature films annually, followed by Nigeria. Hollywood in the United States, though famous, does not produce the most.

How does human eye resolution compare to camera or screen resolutions?

-The human eye doesn't function like a camera. While camera resolutions are measured in pixels, the eye processes information differently, combining signals from various parts of the field of view. An estimate suggests the human eye has a resolution of about 576 megapixels, but this analogy is crude.

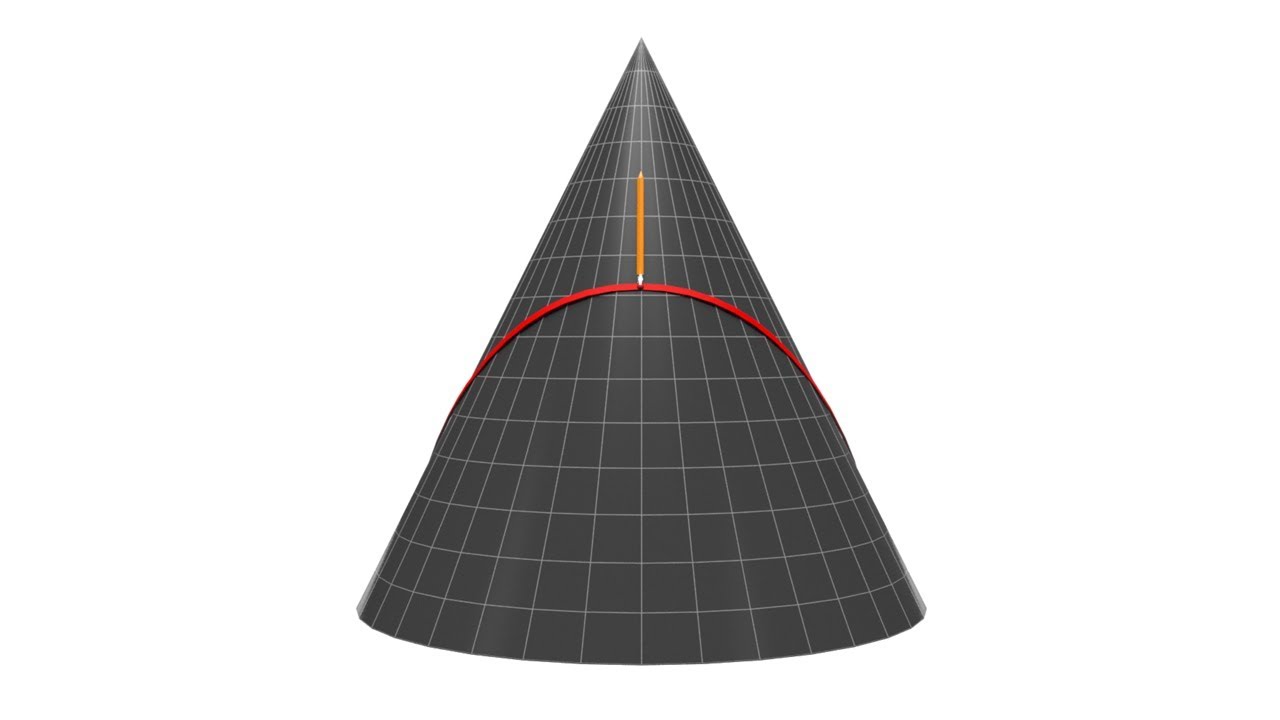

What is spatial resolution, and how does it affect how we see details?

-Spatial resolution refers to the ability to distinguish between nearby pixels or fine details. The more distinct adjacent pixels are, the more detail can be resolved. Factors like lighting, sensor size, and the distance to the subject all affect resolution.

Why do we not see our noses or glasses in our field of vision?

-The brain filters out unchanging stimuli, such as our noses or glasses, because they are not important for processing new visual information.

What is the role of the fovea in vision?

-The fovea is a small pit in the retina that handles central vision, providing optimal color vision and 20/20 acuity. It only covers about two degrees of our field of view.

How does the brain process blind spots in our vision?

-The brain fills in details and merges information from both eyes, compensating for the blind spots caused by where the optic nerve connects to the retina.

How many megapixels would be required to display an image indistinguishable from reality?

-If we consider the entire field of view, around 576 megapixels would be needed to create an image that the average human eye can't differentiate from reality. However, for the central vision processed by the fovea, only about 7 megapixels are required.
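The 576-megapixel figure follows from simple arithmetic once a field of view and an angular pixel size are chosen. The sketch below reproduces it using the inputs commonly attributed to Roger N. Clark's estimate: a 120 by 120 degree field of view and 0.3 arcminutes per pixel. These inputs are not stated in the summary above and should be read as assumptions; the function name `megapixels_for_field` is hypothetical.

```python
def megapixels_for_field(fov_deg_h, fov_deg_v, arcmin_per_pixel):
    """Megapixels needed to tile a field of view at a given angular pixel size."""
    # 60 arcminutes per degree; divide by the angular size of one pixel.
    px_h = fov_deg_h * 60 / arcmin_per_pixel
    px_v = fov_deg_v * 60 / arcmin_per_pixel
    return px_h * px_v / 1e6


# Assumed inputs: 120 x 120 degree field, 0.3 arcminute per pixel.
print(f"{megapixels_for_field(120, 120, 0.3):.0f} megapixels")
```

The result is very sensitive to the assumed pixel size: doubling it to 0.6 arcminutes cuts the total to a quarter, which is one reason the camera analogy is only a rough guide.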

Why doesn't human vision work like a digital camera?

-Unlike a camera that captures static frames, our eyes constantly move, and the brain merges all the visual inputs into a processed perception. The resulting image is not made of pixels but is a dynamic, top-down interpretation of our surroundings.

Can humans have photographic memory?

-No scientific evidence supports the existence of photographic memory. Human memory is not stored with the same accuracy as a digital camera file.

What is the difference between life and movies in terms of narrative resolution?

-Unlike movies that often have clear beginnings, conflicts, and resolutions, life is continuous and doesn't resolve in neat, discrete ways. Life keeps going even after key moments pass.
