Building a Multi-tenant SaaS solution on AWS

Summary

TL;DR: The presenter shares best practices for building a multi-tenant SaaS solution on AWS using serverless technologies. Key topics include SaaS architecture patterns such as the application and control planes; deployment models (silo versus pool); authentication, authorization, and access control with Amazon Cognito and AWS IAM; API throttling with usage plans and API keys; tenant isolation by dynamically injecting tenant IDs into IAM policies at runtime; and CI/CD pipelines for consistent deployments across environments.

Takeaways

- 😊 The talk focuses on best practices for building a SaaS solution on AWS using serverless architecture

- 💡 The application plane contains the core IP/service offering, while the control plane manages operational aspects

- 📝 Registration and onboarding of new tenants is handled by a separate microservice

- 🔐 Authentication uses API Gateway authorizers, API Keys, Usage Plans and Cognito to handle multi-tenant security

- 🚦 Tenant isolation in the pool model is achieved using IAM dynamic policies injected at runtime

- ⚙️ The CI/CD pipeline ensures consistent deployment across shared and dedicated tenant resources

- 🌐 A tenant routing mechanism redirects requests to the appropriate API Gateway based on tenant ID

- 🎚 API Keys allow throttling incoming requests at the tenant level to prevent abuse

- 💵 Tiered pricing models influence provisioning and resource sharing strategies

- 🗄 Item-level access control in DynamoDB (often described as row-level security) restricts each tenant to its own data in the pool model

Q & A

What are the two main components of a SaaS application architecture?

-The two main components are the application plane, which holds the core IP and service offering, and the control plane, which manages operational concerns such as deployment, security, and metrics collection.

What are the two typical deployment models for the application plane?

-The two models are silo, where each tenant has dedicated infrastructure, and pool, where infrastructure is shared across tenants. The reference architecture uses a hybrid approach.

How does the application handle authentication and authorization?

-It uses Amazon Cognito for authentication, which issues JWT tokens. A Lambda authorizer validates each token, applies authorization policies, and associates the request with the tenant's API key so that API Gateway can throttle it under the matching usage plan.
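To make the authorizer's role more concrete, here is a minimal sketch of the response a Lambda authorizer might return. It assumes the JWT has already been verified elsewhere and that the tenant ID travels in a custom claim; the function name and the claim name `custom:tenantId` are hypothetical and not taken from the talk. The `usageIdentifierKey` field is how an authorizer can hand an API key back to API Gateway when the API key source is set to the authorizer.

```python
from typing import Any, Dict


def build_authorizer_response(
    claims: Dict[str, str], api_key: str, method_arn: str
) -> Dict[str, Any]:
    """Build a Lambda authorizer response from already-verified JWT claims.

    Assumption: signature and expiry checks happened before this call.
    """
    tenant_id = claims["custom:tenantId"]  # hypothetical custom claim name
    return {
        "principalId": claims["sub"],
        "policyDocument": {
            "Version": "2012-10-17",
            "Statement": [
                {
                    "Action": "execute-api:Invoke",
                    "Effect": "Allow",
                    "Resource": method_arn,
                }
            ],
        },
        # Passed through to the backend integration as request context.
        "context": {"tenantId": tenant_id},
        # API Gateway uses this key to select the usage plan for throttling.
        "usageIdentifierKey": api_key,
    }
```

Keeping token verification separate from response construction makes the policy-building step easy to test without a real user pool.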

How is tenant isolation achieved?

-For pooled resources, IAM policies generated at runtime with the tenant ID injected restrict access to only that tenant's items in the shared table. For siloed resources, Lambda execution roles limit access to the tenant's dedicated DB tables.
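The pooled-model isolation described above can be sketched as a function that injects a tenant ID into an IAM policy document. This is an illustration under stated assumptions, not the presenter's code: it assumes the shared table's partition key starts with the tenant ID followed by a hyphen, and the function name is hypothetical. The `dynamodb:LeadingKeys` condition key is the IAM mechanism for restricting access by partition key value.

```python
import re
from typing import Any, Dict


def tenant_scoped_policy(table_arn: str, tenant_id: str) -> Dict[str, Any]:
    """Return an IAM policy limiting DynamoDB access to one tenant's items.

    Assumption: partition keys look like "<tenant_id>-<suffix>".
    """
    # Reject wildcards and separators so one tenant cannot widen the match.
    if not re.fullmatch(r"[A-Za-z0-9]+", tenant_id):
        raise ValueError("tenant_id must be alphanumeric")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "dynamodb:GetItem",
                    "dynamodb:PutItem",
                    "dynamodb:UpdateItem",
                    "dynamodb:DeleteItem",
                    "dynamodb:Query",
                ],
                "Resource": table_arn,
                "Condition": {
                    "ForAllValues:StringLike": {
                        "dynamodb:LeadingKeys": [f"{tenant_id}-*"]
                    }
                },
            }
        ],
    }
```

One way to use such a document is to serialize it to JSON and pass it as the session policy when assuming a role through AWS STS, so the resulting temporary credentials are scoped to a single tenant for the duration of the request.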

How are infrastructure resources provisioned when onboarding new tenants?

-A registration service handles tenant onboarding workflows including creation of admin users in Cognito, allocating API keys, and conditionally invoking a provisioning service to deploy dedicated resources for high tier tenants.
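The onboarding flow in this answer can be sketched as a small orchestration function. Everything named here is hypothetical: the tier name `platinum`, the `TenantRecord` fields, and the three injected callables stand in for the Cognito, API key, and provisioning steps, which a real service would implement against AWS APIs.

```python
import uuid
from dataclasses import dataclass
from typing import Callable

# Hypothetical: tiers that receive dedicated (siloed) resources.
DEDICATED_TIERS = {"platinum"}


@dataclass
class TenantRecord:
    tenant_id: str
    name: str
    tier: str
    api_key: str
    dedicated: bool


def register_tenant(
    name: str,
    tier: str,
    admin_email: str,
    create_admin_user: Callable[[str, str], None],
    allocate_api_key: Callable[[str], str],
    provision_stack: Callable[[str], None],
) -> TenantRecord:
    """Run the onboarding steps in order and return the tenant record."""
    tenant_id = uuid.uuid4().hex
    create_admin_user(tenant_id, admin_email)  # e.g. admin user in Cognito
    api_key = allocate_api_key(tier)           # key tied to a usage plan
    dedicated = tier in DEDICATED_TIERS
    if dedicated:
        provision_stack(tenant_id)             # only for high-tier tenants
    return TenantRecord(tenant_id, name, tier, api_key, dedicated)
```

Passing the steps in as callables keeps the sketch runnable without AWS credentials and makes the conditional provisioning branch easy to verify.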

What is the benefit of using Lambda layers?

-Lambda layers allow reuse of common logic around metrics, logs, and authentication across Lambda functions, avoiding code duplication.

How does the architecture support canary deployments?

-It uses CodePipeline to shift traffic between Lambda function versions. This allows testing a new version before routing all traffic to it.

How are costs attributed across tenants in a pooled deployment?

-There is a dedicated lab on implementing tagging and CloudWatch metrics to attribute resource utilization and costs back to specific tenants.
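As a rough illustration of the idea, the sketch below splits a shared bill across tenants in proportion to a per-tenant usage metric. The proportional rule, the function name, and the input shape are assumptions made for this example; the summary does not say which apportionment formula the lab uses.

```python
from typing import Dict


def attribute_cost(
    total_cost: float, usage_by_tenant: Dict[str, float]
) -> Dict[str, float]:
    """Split total_cost across tenants in proportion to their usage.

    usage_by_tenant maps a tenant ID to any consistent usage measure,
    for example request counts gathered from per-tenant metrics.
    """
    total_usage = sum(usage_by_tenant.values())
    if total_usage == 0:
        # No recorded usage: nothing to attribute to any tenant.
        return {tenant: 0.0 for tenant in usage_by_tenant}
    return {
        tenant: total_cost * usage / total_usage
        for tenant, usage in usage_by_tenant.items()
    }
```

In practice different services may need different usage measures (invocations for Lambda, consumed capacity for DynamoDB), with the per-service results summed per tenant.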

What mechanisms handle routing of tenants to the appropriate backend resources?

-Tenants enter their tenant name in the UI. An API then looks up that tenant's settings, such as its API Gateway URL and Cognito user pool, and requests are routed accordingly.
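A minimal sketch of that lookup, assuming an in-memory registry in place of the real tenant table. The registry contents, field names, and the `example.invalid` URLs are all hypothetical placeholders.

```python
from typing import Dict

# Hypothetical registry; a real system would read this from the tenant table.
TENANT_REGISTRY: Dict[str, Dict[str, str]] = {
    "acme": {
        "apiGatewayUrl": "https://acme.example.invalid/prod",
        "userPoolId": "pool-acme",
        "appClientId": "client-acme",
    },
    "globex": {
        "apiGatewayUrl": "https://pooled.example.invalid/prod",
        "userPoolId": "pool-shared",
        "appClientId": "client-shared",
    },
}


def resolve_tenant(
    tenant_name: str, registry: Dict[str, Dict[str, str]]
) -> Dict[str, str]:
    """Return the routing settings for a tenant name typed into the UI."""
    key = tenant_name.strip().lower()  # tolerate casing and stray spaces
    if key not in registry:
        raise LookupError(f"unknown tenant: {tenant_name!r}")
    return registry[key]
```

The client would then sign the user in against the returned user pool and send API calls to the returned URL, so siloed and pooled tenants share the same front end.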

How does the CI/CD pipeline ensure consistency across environments?

-It uses the tenant DB table as source of truth to deploy builds across all tenant stacks and pooled resources in one pass.
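The "one pass" idea can be sketched as deriving the list of stacks to update from the tenant table rows. The row fields (`tenantId`, `dedicated`) and the stack naming scheme are assumptions for illustration only.

```python
from typing import Dict, Iterable, List, Union

Row = Dict[str, Union[str, bool]]


def stacks_to_update(tenant_rows: Iterable[Row]) -> List[str]:
    """List every stack a release must touch, using tenant rows as the source of truth."""
    stacks = ["stack-pooled"]  # hypothetical name of the shared stack
    for row in tenant_rows:
        if row["dedicated"]:
            # Siloed tenants each own a stack that needs the same build.
            stacks.append(f"stack-{row['tenantId']}")
    return stacks
```

A pipeline stage could iterate over this list and apply the same build artifact to each stack, so a tenant onboarded yesterday is picked up by today's release without any pipeline changes.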

Outlines

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنMindmap

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنKeywords

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنHighlights

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنTranscripts

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنتصفح المزيد من مقاطع الفيديو ذات الصلة

Microservices architecture on AWS #aws #amazonwebservices #microservices #devops

Crack the AWS Lambda Code: Top 10 AWS Lambda Interview Questions with Answers Revealed!

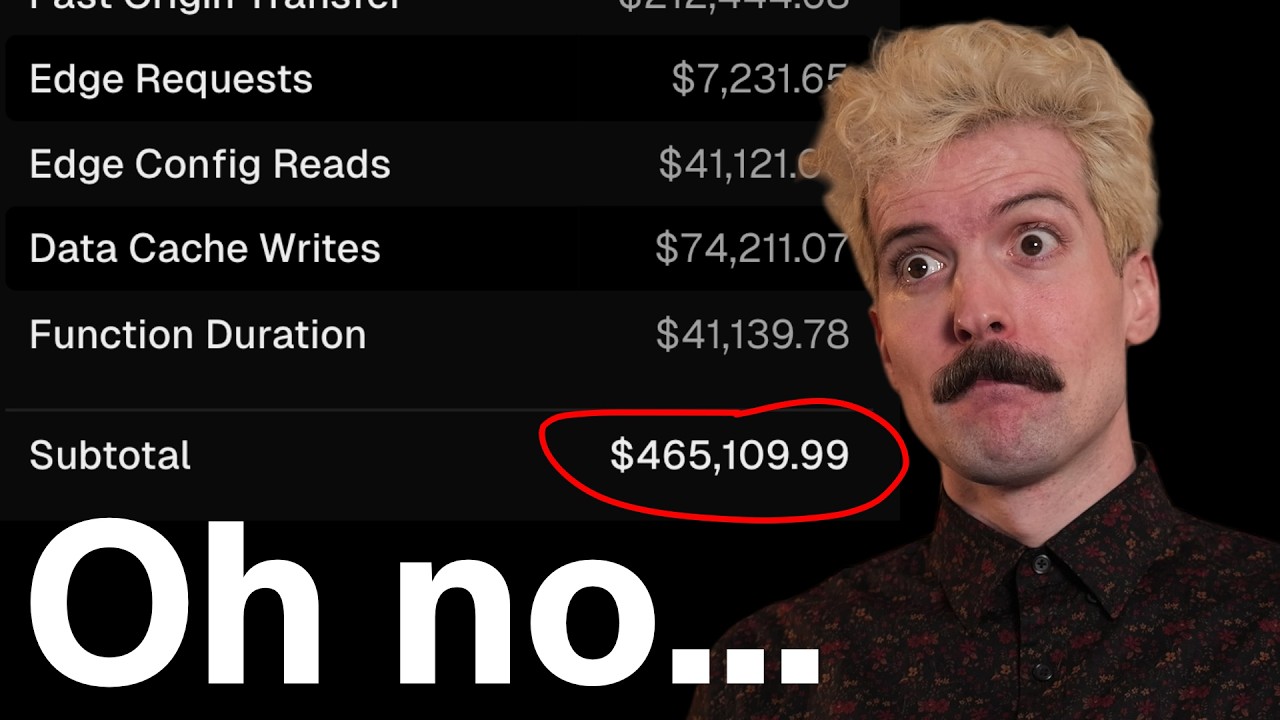

How To Avoid Big Serverless Bills

AWS Cloud Practitioner Exam Questions | CLF - C02 | Tutorial - 08 | Tech India |

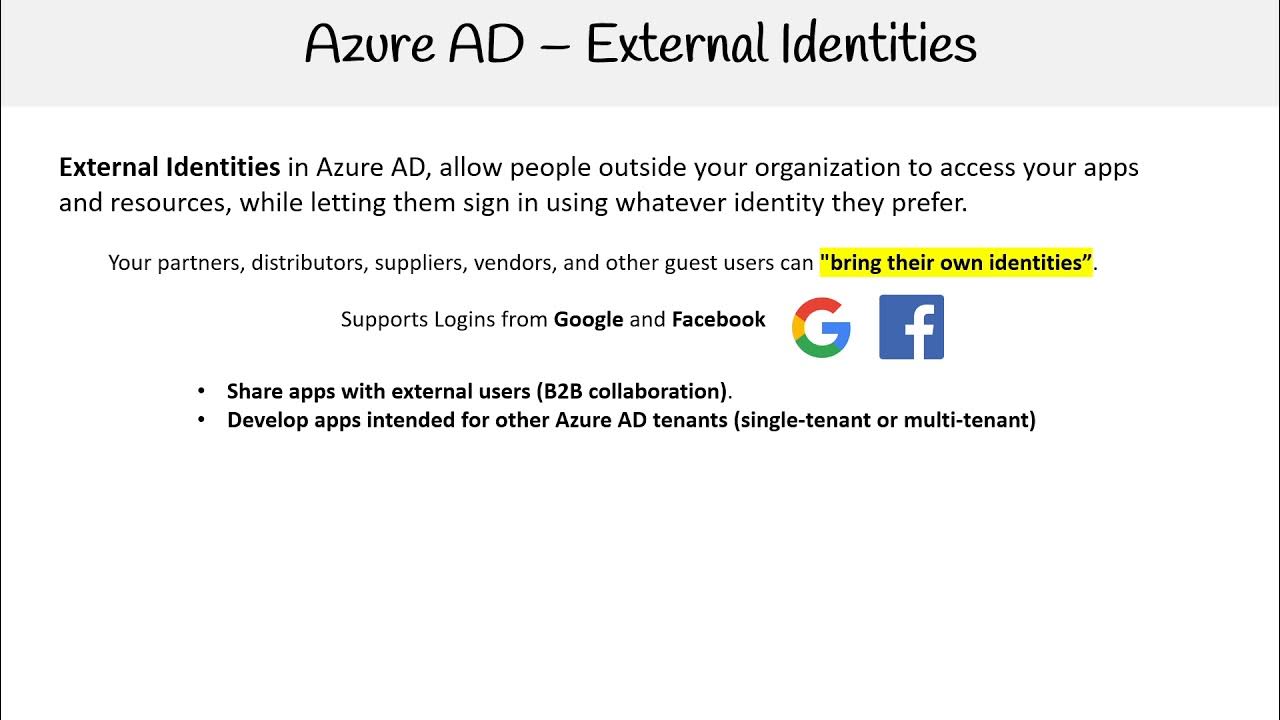

AZ 104 — External Identities

Building a Serverless Data Lake (SDLF) with AWS from scratch

5.0 / 5 (0 votes)