Kaggle Winning Solution Series: Rossmann store sales forecast

Q & A

What is the main task in the Rossmann Store Sales prediction competition?

-The main task is to predict the daily sales of 1,115 Rossmann drugstores for up to six weeks ahead, using historical sales data and additional influencing factors such as promotions, holidays, and competition.

Which factors are included in the dataset that can influence store sales?

-The competition data includes historical sales, customer counts, store open/closed status, state and school holidays, store metadata (store type, assortment, distance to the nearest competitor), and promotion information. Participants were also allowed to add external data, such as weather (temperature and precipitation).

What evaluation metric is used in the competition?

-The competition uses Root Mean Squared Percentage Error (RMSPE), which measures relative errors by normalizing the difference between predicted and actual sales by the actual sales value.
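Written out, RMSPE = sqrt((1/n) × Σ ((y_i - ŷ_i) / y_i)²), where y_i is the actual and ŷ_i the predicted sales for store-day i. In the competition, store-days with zero actual sales were ignored in scoring, which also avoids dividing by zero. A minimal Python sketch of the metric (the function name `rmspe` is our own choice):

```python
import math
from typing import Sequence


def rmspe(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Root Mean Squared Percentage Error over store-days with non-zero sales."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    # Squared relative errors; zero-sales days are skipped, as in the competition.
    squared = [
        ((y - y_hat) / y) ** 2
        for y, y_hat in zip(actual, predicted)
        if y != 0
    ]
    if not squared:
        raise ValueError("no non-zero actual values to score")
    return math.sqrt(sum(squared) / len(squared))


print(rmspe([100.0, 200.0, 0.0], [110.0, 180.0, 50.0]))  # about 0.1
```

In this example the two scored days are each off by 10%, so the result is about 0.1; the zero-sales day does not count.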

Why is feature engineering important in this competition?

-Feature engineering is critical because it allows participants to extract meaningful patterns and relationships from the historical sales data, store metadata, promotions, holidays, and external factors, which significantly improves model performance.

What types of features were extracted in the first-place solution?

-Features were grouped into five categories: recent data statistics (mean, median, deviation, percentiles), temporal information (day of week, event proximity, number of holidays), trends (linear trends per store), store metadata (assortment, competition info, sales ratios), and external data (weather).
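The "recent data statistics" and "trends" groups can be illustrated on a single store's list of daily sales. This is a minimal sketch, not the winner's code: the window length, the function names `recent_stats` and `linear_trend`, and the use of quartiles as the percentiles are assumptions for illustration.

```python
import statistics
from typing import Dict, Sequence


def recent_stats(history: Sequence[float], window: int) -> Dict[str, float]:
    """Summary statistics over the last `window` daily sales of one store."""
    recent = list(history[-window:])
    if len(recent) < 2:
        raise ValueError("need at least two observations in the window")
    q = statistics.quantiles(recent, n=4)  # quartile cut points [q1, median, q3]
    return {
        "mean": statistics.fmean(recent),
        "median": statistics.median(recent),
        "std": statistics.stdev(recent),
        "p25": q[0],
        "p75": q[2],
    }


def linear_trend(history: Sequence[float]) -> float:
    """Least-squares slope of sales against the day index (sales per day)."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two observations")
    xs = range(n)
    x_mean = (n - 1) / 2
    y_mean = statistics.fmean(history)
    num = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, history))
    den = sum((x - x_mean) ** 2 for x in xs)
    return num / den
```

For a steadily rising series such as [100, 110, 120, 130, 140, 150], `linear_trend` returns a slope of 10 sales per day. In practice such features would be computed per store, using only data available before the day being predicted.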

What modeling approach was primarily used in the winning solution?

-The winning solution primarily used Gradient Boosting Machines (GBM), such as XGBoost, combined with extensive feature engineering and selection methods.

How did the first-place solution perform feature selection?

-Feature selection was performed by training multiple models with random subsets of features, validating them on different periods, selecting the best models, and combining the features from these models into a final set.
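The selection loop described above can be sketched as follows. This assumes a hypothetical `evaluate(subset, period)` callback that trains a model on the given features and returns its validation error for one validation period (lower is better); the number of models, subset size, and number of best models kept are illustrative values, not the winner's settings.

```python
import random
from typing import Callable, List, Sequence, Set, Tuple


def select_features(
    features: Sequence[str],
    evaluate: Callable[[List[str], int], float],  # hypothetical train-and-score callback
    periods: Sequence[int],
    n_models: int = 50,
    subset_size: int = 10,
    top_k: int = 5,
    seed: int = 0,
) -> Set[str]:
    """Random-subset feature selection: score many random subsets, merge the best."""
    rng = random.Random(seed)
    scored: List[Tuple[float, List[str]]] = []
    for _ in range(n_models):
        subset = rng.sample(list(features), k=min(subset_size, len(features)))
        # Average the error over several validation periods for robustness.
        err = sum(evaluate(subset, p) for p in periods) / len(periods)
        scored.append((err, subset))
    scored.sort(key=lambda pair: pair[0])
    # The final feature set is the union of the features used by the best models.
    selected: Set[str] = set()
    for _, subset in scored[:top_k]:
        selected.update(subset)
    return selected
```

Taking the union of the best subsets keeps features that repeatedly appear in good models, while averaging over several periods reduces the chance of picking a subset that only looks good on one particular stretch of time.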

What are entity embeddings, and how were they used in the third-place solution?

-Entity embeddings are learned, low-dimensional vector representations of high-cardinality categorical features. In the third-place solution, a neural network learned them as part of the sales prediction task; the store ID, for example, was mapped from 1,115 distinct values to a 10-dimensional vector. The learned vectors could then be used as input for models like k-NN, Random Forest, or GBMs to improve their predictions.
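An embedding layer is equivalent to multiplying a one-hot vector by a weight matrix, which reduces to looking up one row. The sketch below uses the sizes mentioned above (1,115 stores, 10 dimensions), with random weights standing in for trained ones; the helper names are hypothetical and no neural-network training is shown.

```python
import random
from typing import List

N_STORES = 1115  # store IDs 1..1115, mapped to 0-based rows via store_id - 1
EMB_DIM = 10     # embedding size for the store ID

rng = random.Random(42)
# Placeholder weights: in the real setting these are learned by a neural network.
store_embedding: List[List[float]] = [
    [rng.gauss(0.0, 0.05) for _ in range(EMB_DIM)] for _ in range(N_STORES)
]


def one_hot(index: int, size: int) -> List[float]:
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


def embed_via_one_hot(store_idx: int) -> List[float]:
    """One-hot vector times the embedding matrix (the 'layer' view)."""
    oh = one_hot(store_idx, N_STORES)
    return [
        sum(oh[i] * store_embedding[i][j] for i in range(N_STORES))
        for j in range(EMB_DIM)
    ]


def embed(store_idx: int) -> List[float]:
    """Equivalent and much cheaper: select the matching row directly."""
    return store_embedding[store_idx]


def model_input(store_idx: int, numeric: List[float]) -> List[float]:
    """Dense feature vector for a downstream model such as k-NN or a GBM."""
    return embed(store_idx) + numeric
```

For a downstream model, the 10 values from `model_input` stand in for a 1,115-column one-hot encoding of the store ID, and stores that behave similarly end up close together once the weights are trained.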

Why is it important to evaluate time series data using chronological splits rather than random splits?

-Using chronological splits ensures that models are tested on the latest data, mimicking real-world forecasting conditions, whereas random splits can give overly optimistic results by mixing future information into training.
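A minimal chronological split, assuming rows stored as (date, store_id, sales) tuples and a validation window of 42 days to match the six-week forecast horizon; the row layout and function name are illustrative.

```python
from datetime import date, timedelta
from typing import List, Tuple

# Hypothetical row layout: (date, store_id, sales).
Row = Tuple[date, int, float]


def chronological_split(
    rows: List[Row], holdout_days: int = 42
) -> Tuple[List[Row], List[Row]]:
    """Hold out the most recent `holdout_days` days (six weeks by default)."""
    if not rows:
        raise ValueError("no rows to split")
    last_day = max(r[0] for r in rows)
    cutoff = last_day - timedelta(days=holdout_days)
    # Every training day precedes every validation day, so no future leaks in.
    train = [r for r in rows if r[0] <= cutoff]
    valid = [r for r in rows if r[0] > cutoff]
    return train, valid
```

Validating on several such windows (for example, the last six weeks and the six weeks before them) gives a more stable estimate than a single split.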

What role did weather and holiday data play in improving sales predictions?

-Weather and holiday data provided additional context for daily sales fluctuations. For example, bad weather may reduce sales, while holidays and promotions can increase sales. Including these features helped the model better capture real-world variations.
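Holiday data is often turned into features such as "days since the last holiday" and "days until the next holiday", one way to encode the event proximity mentioned earlier. A minimal sketch, assuming a sorted list of holiday dates for the relevant state; the function name is hypothetical.

```python
from bisect import bisect_left, bisect_right
from datetime import date
from typing import List, Optional, Tuple


def holiday_proximity(
    day: date, holidays: List[date]
) -> Tuple[Optional[int], Optional[int]]:
    """Return (days since the last holiday, days until the next holiday).

    `holidays` must be sorted. A holiday on `day` itself counts as 0 for both;
    None means the list has no holiday on that side of `day`.
    """
    i = bisect_right(holidays, day)  # holidays[:i] are <= day
    since = (day - holidays[i - 1]).days if i > 0 else None
    j = bisect_left(holidays, day)   # holidays[j:] are >= day
    until = (holidays[j] - day).days if j < len(holidays) else None
    return since, until
```

With holidays on 2015-04-03, 2015-04-06 and 2015-05-01, the day 2015-04-20 gets (14, 11): 14 days after the last holiday and 11 days before the next one. This lets a model pick up effects such as shopping before a closure day.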

How did log transformation and multiplier adjustments improve predictions?

-The target sales variable was log-transformed to stabilize variance and improve model learning. After predictions, a multiplier (e.g., 0.985–0.99) was applied to adjust for systematic bias, which slightly improved the RMSPE and leaderboard rankings.
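Both steps are short. A minimal sketch, assuming `log1p`/`expm1` as the transform pair (so zero-sales days stay finite) and 0.985 as an illustrative factor from the range quoted above; the function names are hypothetical. A factor below 1 tends to help because RMSPE punishes over-prediction harder than under-prediction: with non-negative predictions, an under-prediction can cost at most 100% of the actual value, while an over-prediction has no upper bound.

```python
import math
from typing import List, Sequence


def to_log_target(sales: Sequence[float]) -> List[float]:
    """Training target on the log scale; log1p keeps zero-sales days finite."""
    return [math.log1p(s) for s in sales]


def from_log_prediction(log_preds: Sequence[float], multiplier: float = 0.985) -> List[float]:
    """Back-transform with expm1, then scale by a correction factor."""
    return [math.expm1(p) * multiplier for p in log_preds]


def rmspe(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Minimal RMSPE over non-zero actuals, used to compare the two predictions."""
    sq = [((y - p) / y) ** 2 for y, p in zip(actual, predicted) if y != 0]
    return math.sqrt(sum(sq) / len(sq))


actual = [100.0, 200.0, 300.0]
# Pretend the model's outputs are systematically 2% too high on the original scale.
log_preds = to_log_target([a * 1.02 for a in actual])
raw = from_log_prediction(log_preds, multiplier=1.0)
adjusted = from_log_prediction(log_preds, multiplier=0.985)
print(rmspe(actual, raw), rmspe(actual, adjusted))
```

Here the uncorrected predictions have an RMSPE of 0.02, and scaling by 0.985 brings it down to roughly 0.005. On real data the factor would be tuned on a chronological validation period rather than chosen by hand.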

What is the key insight regarding the effectiveness of feature engineering versus luck in leaderboard rankings?

-Thorough feature engineering is what drives strong model performance, but the top leaderboard positions are separated by very small margins, so luck and fine-tuning of details such as the prediction multiplier can also decide the final ranking.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

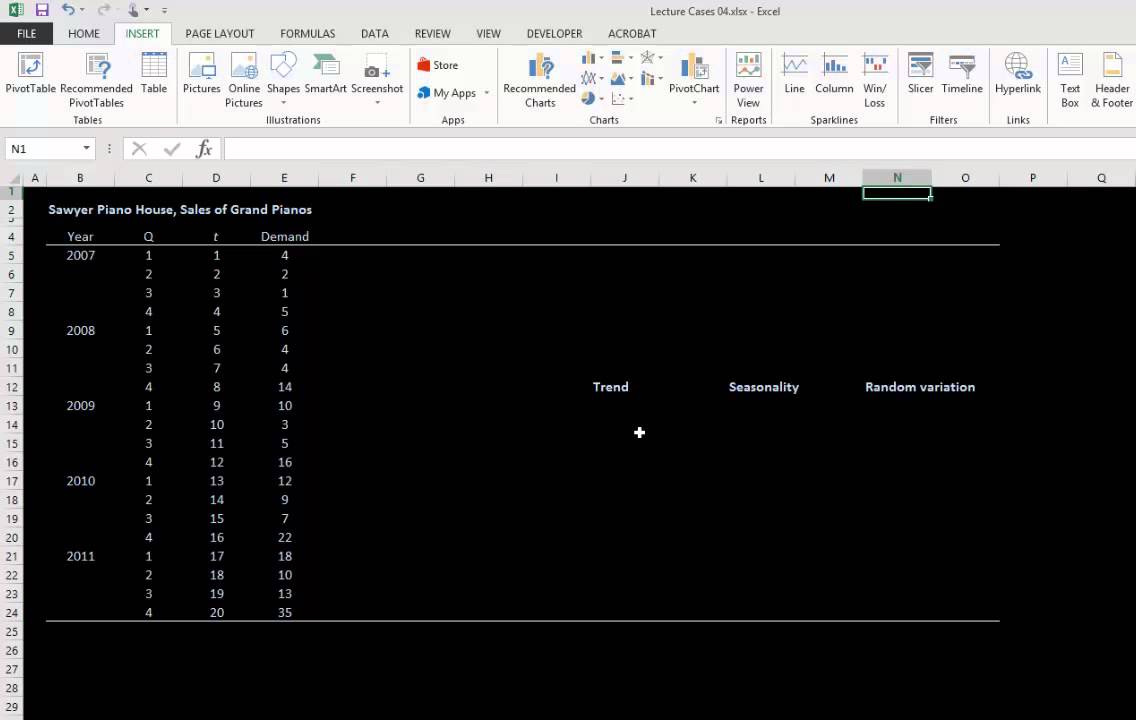

Decompostion1

Statistical Analysis Project Group(15)

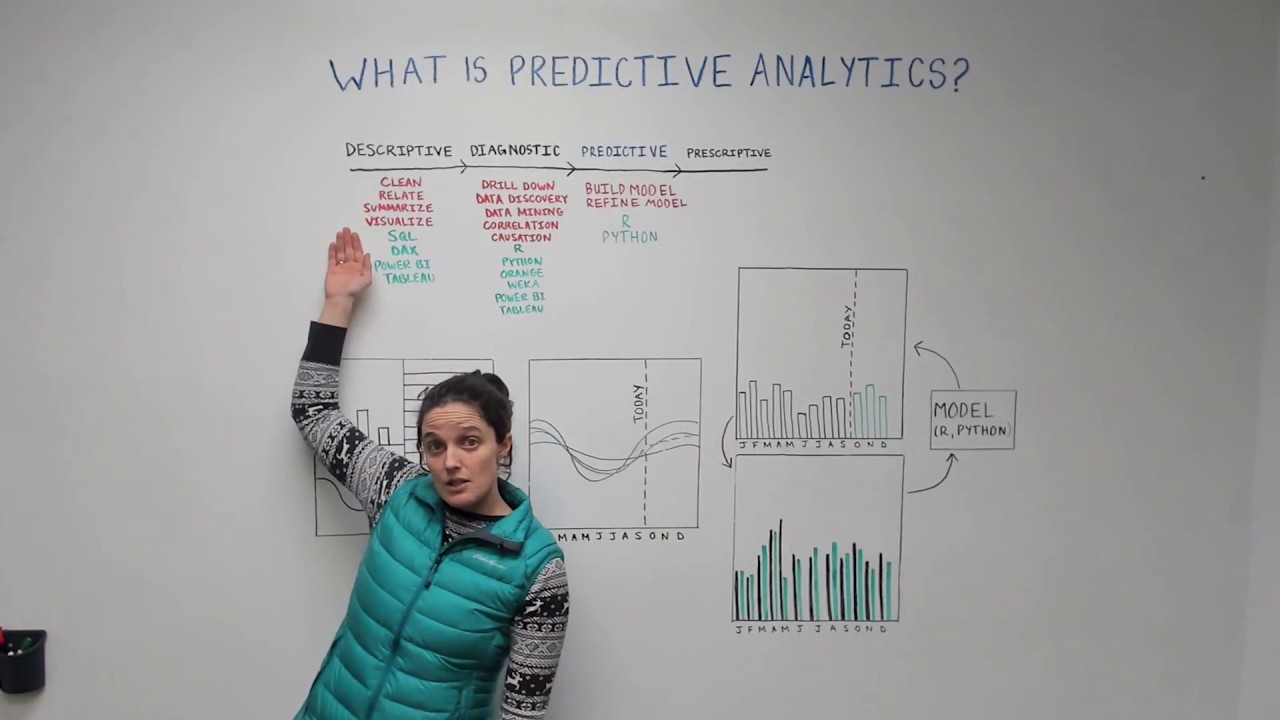

The Fundamentals of Predictive Analytics - Data Science Wednesday

$0 TO $1,000+ in 7 DAYS with Shopify Dropshipping

Easiest Way To Start Dropshipping in 2025

How I Actually Find Winning Products as a Dropshipping Beginner

I Went From $0 - $3000/Day Dropshipping In 7 Days using AI

5.0 / 5 (0 votes)