🔥 NEW LLama Embedding for Fast NLP💥 Llama-based Lightweight NLP Toolkit 💥

Summary

TLDRThe video introduces Word Lama, a lightweight NLP toolkit that enhances efficiency in natural language processing tasks. It utilizes components from large language models to create compact word representations, significantly reducing model size while maintaining performance. Key features include similarity scoring, document ranking, and fuzzy duplication, making it ideal for business-critical tasks. The video also demonstrates a Gradio application for interactively exploring Word Lama's capabilities.

Takeaways

- 🔍 The script introduces 'Word Lama', a new lightweight NLP toolkit designed for efficiency and compactness in natural language processing tasks.

- 🌟 NLP tasks such as finding sentence similarity, fuzzy duplication, and semantic search are crucial for various business applications and data science projects.

- 📦 Word Lama utilizes components from large language models to create efficient and compact word representations, like GloVe and fastText.

- 🚀 Word Lama's model is substantially smaller compared to other models, with a 256-dimensional model being only 16MB, making it highly efficient for resource-limited environments.

- 🏆 The toolkit improves on the MTB Benchmark, which evaluates embedding models, showcasing its effectiveness against popular models like Sentence-BERT and GloVe.

- 📈 Word Lama offers various functionalities like similarity scoring, re-ranking, and de-duplication, which are essential for tasks such as IT service management and e-commerce.

- 🛠️ The script demonstrates practical applications of Word Lama through a Gradio demo deployed on Hugging Face Spaces, allowing users to interact with the model.

- 📝 The model's small size and speed make it suitable for real-time applications, where quick processing of NLP tasks is necessary.

- 🔧 The script provides insights into the model's training process, mentioning that it was trained on a single A100 GPU for 12 hours, highlighting the model's optimization.

- 🌐 The creator encourages viewers to experiment with the model through the provided Gradio application and share their feedback, promoting community engagement with the project.

Q & A

What is Word Lama and what does it offer?

-Word Lama is a lightweight NLP toolkit that provides a utility for natural language processing and word embedding models. It recycles components from large language models to create efficient and compact word representations.

What is the significance of NLP in the context mentioned?

-In the context, NLP (Natural Language Processing) involves tasks like finding similarity between sentences, fuzzy duplication, and other language-related tasks. It's crucial for efficiency and accuracy in business-critical applications.

How does Word Lama improve upon existing models?

-Word Lama improves upon existing models by offering a smaller, more efficient model that performs well on MTB Benchmark evaluations. It is substantially smaller in size compared to other models like GloVe, making it more suitable for business use cases that require speed and nimbleness.

What is the size difference between Word Lama and GloVe 300D?

-Word Lama's model is just 16 MB for 256 dimensions, whereas GloVe 300D is greater than 2GB, making Word Lama significantly smaller and more lightweight.

What are some of the tasks that Word Lama can assist with?

-Word Lama can assist with tasks such as similarity finding, semantic search, reranking, classification, clustering, and fuzzy duplication, which are essential for various applications like IT service management and e-commerce.

How does Word Lama utilize components from large language models?

-Word Lama extracts the token embedding codebook from a state-of-the-art language model and trains a small contextless model in a general-purpose embedding framework, resulting in a compact and efficient model.

What is the role of embeddings in Word Lama?

-Embeddings in Word Lama serve as numerical representations of words that can be used for various NLP tasks. They are created from recycled components of large language models and are optimized for efficiency and compactness.

How can users interact with Word Lama through the Gradio application?

-Users can interact with Word Lama through a Gradio application that allows them to perform tasks like calculating similarity scores between sentences, ranking documents, and performing fuzzy duplication, all within an easy-to-use interface.

What is the significance of the benchmark scores mentioned in the script?

-The benchmark scores are significant as they indicate how well Word Lama performs compared to other models in various NLP tasks. These scores help users understand the model's effectiveness and suitability for their specific use cases.

How does Word Lama's model size impact its practical applications?

-Word Lama's small model size allows for faster processing and lower resource requirements, making it ideal for real-time applications and environments with limited computational resources.

Outlines

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифMindmap

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифKeywords

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифHighlights

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифTranscripts

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифПосмотреть больше похожих видео

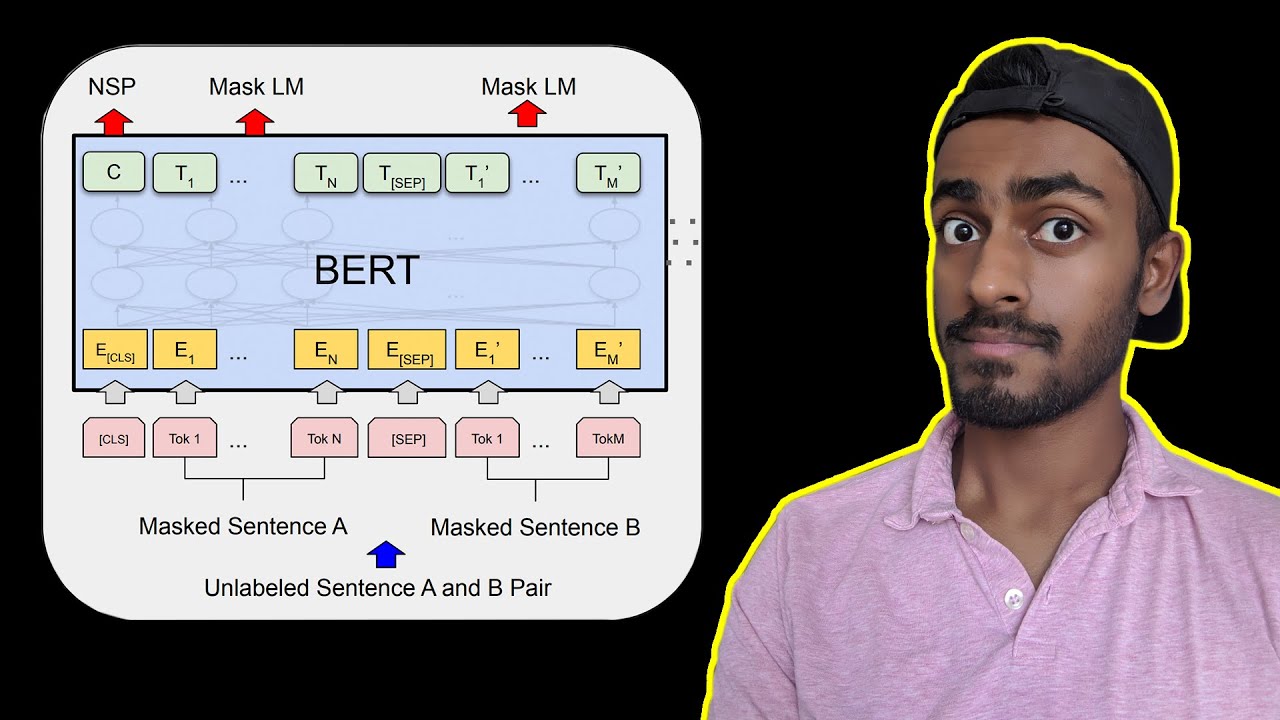

BERT Neural Network - EXPLAINED!

Stop Words: NLP Tutorial For Beginners - S2 E4

Natural Language Processing: Crash Course AI #7

Introduction to Natural Language Processing in Hindi ( NLP ) 🔥

Generative AI Vs NLP Vs LLM - Explained in less than 2 min !!!

Natural Language Processing (Part 1): Introduction to NLP & Data Science

5.0 / 5 (0 votes)