Practical Intro to NLP 23: Evolution of word vectors Part 2 - Embeddings and Sentence Transformers

Summary

TLDRThis script discusses the evolution of word and sentence vector algorithms in natural language processing (NLP). It highlights the transition from TF-IDF for document comparisons to dense vector representations like Word2Vec, which addressed the limitations of sparse vectors. The script also covers the introduction of algorithms like Sense2Vec for word sense disambiguation and contextual embeddings like ELMo. It emphasizes the significance of sentence transformers, which provide context-aware embeddings and are currently state-of-the-art for NLP tasks. The practical guide suggests using TF-IDF for high-level document comparisons and sentence transformers for achieving state-of-the-art accuracies in NLP projects.

Takeaways

- 📊 Understanding the evolution of word and sentence vector algorithms is crucial for natural language processing (NLP).

- 🔍 The pros and cons of each vector algorithm should be well understood for practical application in NLP tasks.

- 📚 A baseline understanding of algorithms from TF-IDF to sentence Transformers is sufficient for many practical applications.

- 🌟 Word2Vec introduced dense embeddings, allowing for real-world operations like analogies to be performed in vector space.

- 🕵️♂️ Word2Vec's limitation is its inability to differentiate between different senses of a word, such as 'mouse' in computing vs. a rodent.

- 📈 Sense2Vec improves upon Word2Vec by appending parts of speech or named entity recognition tags to words, aiding in disambiguation.

- 📉 FastText addresses out-of-vocabulary words by dividing words into subtokens, but still has limitations in word sense disambiguation.

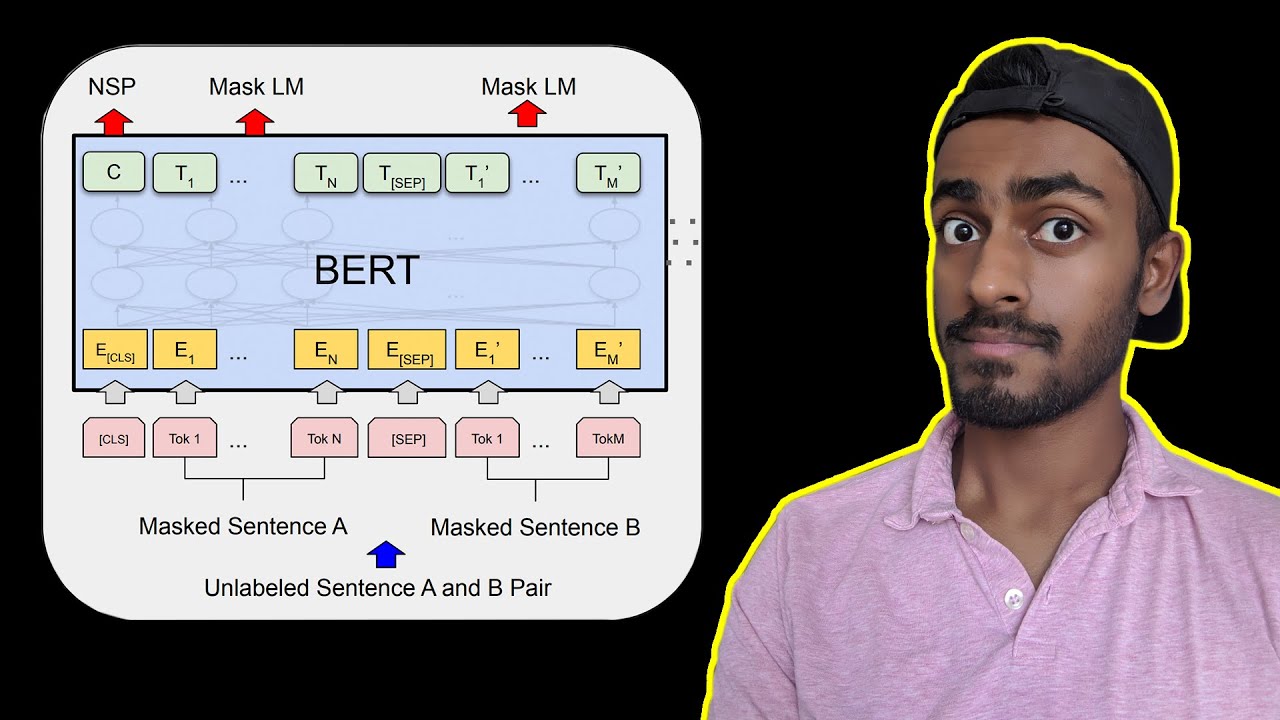

- 🌐 Contextual embeddings like ELMo and BERT capture the context of words better than previous algorithms, improving word sense disambiguation.

- 📝 Sentence embeddings, such as those from sentence Transformers, provide a more nuanced representation by considering word importance and context.

- 🏆 State-of-the-art sentence Transformers can handle varying input lengths and generate high-quality vectors for words, phrases, sentences, and documents.

- 🛠️ For lightweight tasks, TF-IDF or Word2Vec might suffice, but for state-of-the-art accuracy, sentence Transformers are recommended.

Q & A

What is the primary purpose of understanding the evolution of word and sentence vectors?

-The primary purpose is to grasp the strengths and weaknesses of various word and sentence vector algorithms, enabling their appropriate application in natural language processing tasks.

How does the Word2Vec algorithm address the problem of sparse embeddings?

-Word2Vec provides dense embeddings by training a neural network to predict surrounding words for a given word, which results in similar words having closer vector representations.

What is a limitation of Word2Vec when it comes to differentiating word senses?

-Word2Vec struggles to differentiate between different senses of a word, such as 'mouse' referring to a computer mouse or a house mouse.

How does the Sense2Vec algorithm improve upon Word2Vec?

-Sense2Vec appends parts of speech or named entity recognition tags to words during training, allowing it to differentiate between different senses of a word.

What is the main issue with using averaged word vectors for sentence embeddings?

-Averaging word vectors does not capture the importance or context of individual words within the sentence, resulting in a loss of nuance.

How do contextual embeddings like ELMo address the shortcomings of Word2Vec and Sense2Vec?

-Contextual embeddings like ELMo provide word vectors that are sensitive to the context in which they appear, thus improving word sense disambiguation.

What is the role of sentence transformers in generating sentence vectors?

-Sentence transformers generate sentence vectors by considering the context and relationships among words, resulting in vectors that better represent the meaning of sentences.

How do algorithms like Skip-Thought Vectors and Universal Sentence Encoder improve upon traditional word vectors?

-These algorithms focus on generating sentence or document vectors directly, aiming to capture the overall meaning rather than averaging individual word vectors.

What is the significance of using sentence transformers for state-of-the-art NLP projects?

-Sentence transformers are currently the state of the art for generating high-quality sentence vectors, which are crucial for achieving high accuracy in NLP tasks.

How can dense embeddings from algorithms like sentence transformers be utilized for document comparison?

-Dense embeddings can be used to convert documents into vectors, allowing for efficient comparison and retrieval of similar documents in vector databases.

Outlines

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنMindmap

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنKeywords

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنHighlights

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنTranscripts

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنتصفح المزيد من مقاطع الفيديو ذات الصلة

WML और MLM में अंतर क्या है? जानिए आसान भाषा में @Directsellingsuccessguaranty

BERT Neural Network - EXPLAINED!

10. Word Sense Disambiguity (WSD) | Dictionary Based Approach for Word Sense Disambiguity | NLP

The History of Natural Language Processing (NLP)

NLP vs NLU vs NLG

Natural Language Processing: Crash Course AI #7

5.0 / 5 (0 votes)