Object Detection using OpenCV Python in 15 Minutes! Coding Tutorial #python #beginners

Summary

TLDR: This tutorial introduces viewers to object detection using OpenCV and Python. The presenter demonstrates how to install the necessary libraries, access a camera feed, and identify various objects in real time. The video also covers how to use the `gtts` and `playsound` libraries to make the computer speak the names of the detected objects.

Takeaways

- 😀 The tutorial focuses on object detection using OpenCV, a popular computer vision library.

- 🛠️ The presenter walks through installing the necessary libraries, including `opencv-contrib-python`, `cvlib`, `gtts`, and `playsound`.

- 🔎 `opencv-contrib-python` is preferred over `opencv-python` because it bundles the extra (contrib) modules alongside the main ones.

- 📱 The script demonstrates real-time object detection using the computer's webcam, identifying various objects like an apple, orange, and cell phone.

- 🎯 The `cvlib` library is utilized for its pre-trained models to recognize common objects within the video frames.

- 🗣️ The tutorial includes a feature to convert detected objects into spoken words using Google Text-to-Speech (gtts).

- 🔊 The `playsound` library is used to play the synthesized speech.

- 📝 The script maintains a list of unique detected objects to avoid repetition in the output.

- 📑 The tutorial concludes with a function that converts the list of detected objects into a natural-sounding sentence and plays it aloud.

- 🎉 The presenter encourages user interaction through comments, likes, and subscriptions for further tutorials.

Q & A

What is the main focus of the tutorial?

- The main focus of the tutorial is to demonstrate how to use OpenCV for object detection, allowing the computer to identify and announce different objects seen through a camera feed.

Why is `opencv-contrib-python` used instead of `opencv-python`?

- `opencv-contrib-python` is used because it includes extra (contrib) modules in addition to the main modules shipped in `opencv-python`, providing more functionality for advanced tasks such as object detection.

What libraries are installed for object detection in the tutorial?

- The tutorial installs `opencv-contrib-python` and `cvlib` for object detection, `gtts` for text-to-speech conversion, and `playsound` for audio playback.

How does the tutorial handle real-time object detection?

- The tutorial accesses the camera using `cv2.VideoCapture` and processes each frame in a loop to detect objects in real time, then draws boxes and labels around the detected objects.
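
The loop described in this answer can be sketched roughly as follows. This is a minimal sketch rather than the presenter's exact script: it assumes `cvlib`'s `detect_common_objects` and `draw_bbox` functions and a webcam at index 0, and the function name `run_detection` and the window title are made up for illustration. The imports are deferred into the function so the file can be loaded on a machine without a camera or the libraries installed.

```python
def run_detection(camera_index=0):
    """Show a live webcam feed with boxes drawn around detected objects.

    Press 'q' in the video window to stop.
    """
    # Imported lazily (an illustrative choice, not something the video does).
    import cv2
    import cvlib as cv
    from cvlib.object_detection import draw_bbox

    video = cv2.VideoCapture(camera_index)
    try:
        while True:
            ok, frame = video.read()
            if not ok:  # camera unavailable or stream ended
                break
            # Run the pre-trained detector on the current frame.
            bbox, label, conf = cv.detect_common_objects(frame)
            # Draw a labelled rectangle for every detection.
            output = draw_bbox(frame, bbox, label, conf)
            cv2.imshow("Object Detection", output)
            # Stop when the user presses 'q'.
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        # Always free the camera and close the window.
        video.release()
        cv2.destroyAllWindows()
```

Calling `run_detection()` starts the feed. The `try`/`finally` is there so the camera is released even if detection raises an error partway through.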

What function is used to draw boxes around detected objects?

- The `draw_bbox` function from `cvlib.object_detection` is used to draw boxes around the detected objects in the video frames.

How is the list of detected objects managed to avoid duplicates?

- The tutorial uses a `for` loop to check whether an item is already in the `labels` list before appending it, ensuring each object is only announced once.
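
A small sketch of that duplicate check, using plain Python lists. The helper name `add_new_labels` is hypothetical; the video is described as doing the check inline in the detection loop.

```python
def add_new_labels(seen, detected):
    """Append each detected label to `seen` unless it is already there."""
    for item in detected:
        if item not in seen:
            seen.append(item)
    return seen


labels = []
add_new_labels(labels, ["apple", "orange"])                # first frame
add_new_labels(labels, ["orange", "cell phone", "apple"])  # later frame
print(labels)  # ['apple', 'orange', 'cell phone']
```

A list is used instead of a set so that the objects are announced in the order they were first seen.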

What is the purpose of the 'speech' function defined in the tutorial?

- The `speech` function converts the list of detected objects into a natural-sounding sentence and then uses the `gtts` library to turn that text into speech.

How does the tutorial ensure a more natural pause in the spoken output?

- The tutorial uses string interpolation to build phrases separated by commas and a final "and", then joins them into a single string so that the computer pauses naturally between the detected objects when it speaks.
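
One way to build such a sentence is sketched below. The exact wording used in the video is not given here, so the "I found ..." phrasing, the article handling, and the names `with_article` and `build_sentence` are all assumptions made for illustration.

```python
def with_article(name):
    """Prefix a label with 'a' or 'an' (simple vowel check, not full grammar)."""
    article = "an" if name[0].lower() in "aeiou" else "a"
    return f"{article} {name}"


def build_sentence(labels):
    """Turn a list of labels into one sentence with commas and a final 'and'."""
    if not labels:
        return "I did not find anything."
    phrases = [with_article(name) for name in labels]
    if len(phrases) == 1:
        return f"I found {phrases[0]}."
    # The commas give the speech engine places to pause.
    return f"I found {', '.join(phrases[:-1])}, and {phrases[-1]}."


print(build_sentence(["apple", "orange", "cell phone"]))
# I found an apple, an orange, and a cell phone.
```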

What is the significance of creating a 'sounds' directory in the project?

- The `sounds` directory is created to store the audio files generated by the `gtts` library, which are then played using the `playsound` library.
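
The save-then-play step could look roughly like this. It is a sketch under assumptions: the helper names `sound_file_path` and `speak` and the `.mp3` file name are hypothetical, and the third-party imports are deferred so the path helper can be used on its own. Note that `gTTS` needs an internet connection, because the speech is synthesized by a Google service.

```python
import os


def sound_file_path(name, directory="sounds"):
    """Create the sounds directory if needed and return the path for a clip."""
    os.makedirs(directory, exist_ok=True)
    return os.path.join(directory, f"{name}.mp3")


def speak(text, path):
    """Synthesize `text` to an MP3 at `path`, then play it."""
    # Imported lazily (an illustrative choice) so the helper above
    # works without these packages installed.
    from gtts import gTTS
    from playsound import playsound

    gTTS(text=text, lang="en").save(path)
    playsound(path)
```

For example, `speak("I found an apple.", sound_file_path("objects"))` would write `sounds/objects.mp3` and play it.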

How does the tutorial handle user interaction to stop the object detection process?

- The tutorial calls `cv2.waitKey` on each pass through the loop to check whether the user has pressed the `q` key; if so, it breaks out of the loop and stops the object detection process.
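
The key check is commonly written as `cv2.waitKey(1) & 0xFF == ord("q")`. The sketch below isolates that comparison in a hypothetical helper, `is_quit_key`, so it can be tried without a camera. It assumes the usual behaviour of `cv2.waitKey`: it returns `-1` when no key was pressed, and on some platforms the returned code may carry extra high bits, which is why only the low byte is compared.

```python
def is_quit_key(key_code, quit_char="q"):
    """Return True if the code returned by cv2.waitKey corresponds to quit_char."""
    # Keep only the low 8 bits before comparing with the character code.
    return (key_code & 0xFF) == ord(quit_char)


print(is_quit_key(ord("q")))  # True
print(is_quit_key(-1))        # False: no key was pressed
```

Inside the detection loop this would be used as `if is_quit_key(cv2.waitKey(1)): break`.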

Browse More Related Videos

Real Time Sign Language Detection with Tensorflow Object Detection and Python | Deep Learning SSD

25 - Reading Images, Splitting Channels, Resizing using openCV in Python

Object Detection Using OpenCV Python | Object Detection OpenCV Tutorial | Simplilearn

Measure Size of Object in Images ACCURATELY using OpenCV Python

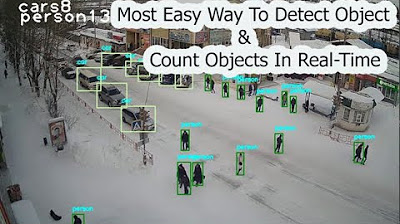

Most Easy Way To Object Detection & Object Counting In Real Time | computer vision | python opencv

What is OpenCV with Python🐍 | Complete Tutorial [Hindi]🔥
